# Resume a specific session by ID (-r)

# Verbose mode (debug output)
hermes chat --verbose

# Isolated git worktree (for running multiple agents in parallel)
hermes -w                      # Interactive mode in worktree
hermes -w -q "Fix issue #123"  # Single query in worktree
```

## Interface Layout

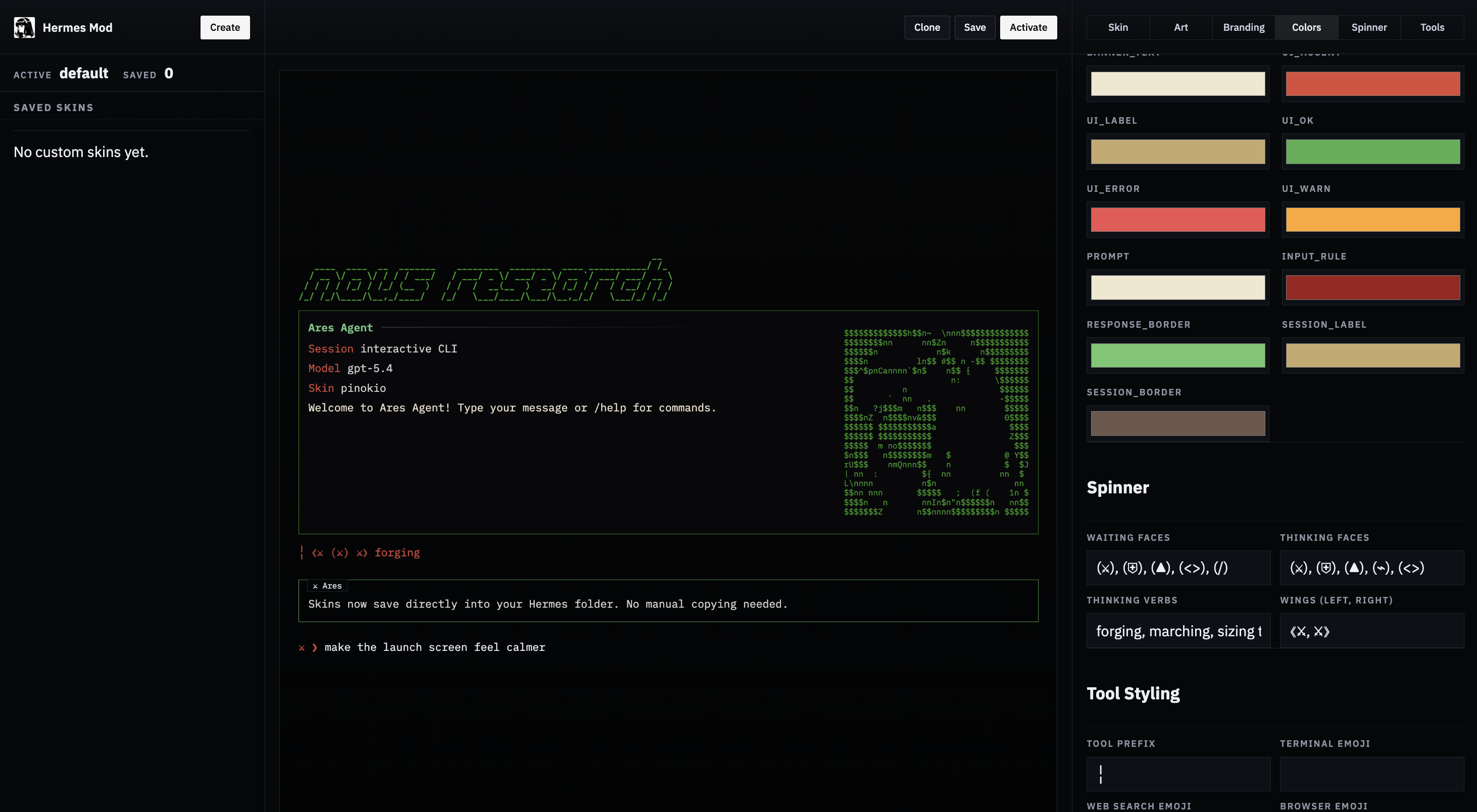

The Hermes CLI banner, conversation stream, and fixed input prompt rendered as a stable docs figure instead of fragile text art.

The welcome banner shows your model, terminal backend, working directory, available tools, and installed skills at a glance.

### Status Bar

A persistent status bar sits above the input area, updating in real time:

```
⚕ claude-sonnet-4-20250514 │ 12.4K/200K │ [██████░░░░] 6% │ $0.06 │ 15m
```

| Element | Description |
|---------|-------------|
| Model name | Current model (truncated if longer than 26 chars) |
| Token count | Context tokens used / max context window |
| Context bar | Visual fill indicator with color-coded thresholds |
| Cost | Estimated session cost (or `n/a` for unknown/zero-priced models) |
| Duration | Elapsed session time |

The bar adapts to terminal width — full layout at ≥ 76 columns, compact at 52–75, minimal (model + duration only) below 52.
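The width breakpoints can be read as a simple selector. Below is a minimal Python sketch. `status_bar_layout` and the layout names are illustrative, not Hermes's actual implementation.

```python
def status_bar_layout(columns: int) -> str:
    """Pick a status-bar layout for a terminal width (thresholds from the docs)."""
    if columns >= 76:
        return "full"      # model, tokens, context bar, cost, duration
    if columns >= 52:
        return "compact"   # 52 to 75 columns
    return "minimal"       # model + duration only
```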

**Context color coding:**

| Color | Threshold | Meaning |
|-------|-----------|---------|
| Green | < 50% | Plenty of room |
| Yellow | 50–80% | Getting full |
| Orange | 80–95% | Approaching limit |
| Red | ≥ 95% | Near overflow — consider `/compress` |
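Here is a sketch of the color mapping. It assumes half-open bands, so exactly 80% counts as orange rather than yellow. `context_color` is a hypothetical helper.

```python
def context_color(used_tokens: int, max_tokens: int) -> str:
    """Map context usage to the documented color bands (half-open bands assumed)."""
    pct = 100 * used_tokens / max_tokens
    if pct < 50:
        return "green"
    if pct < 80:
        return "yellow"
    if pct < 95:
        return "orange"
    return "red"
```

For the status bar example above, `context_color(12_400, 200_000)` is about 6%, which falls in the green band.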

Use `/usage` for a detailed breakdown including per-category costs (input vs output tokens).

### Session Resume Display

When resuming a previous session (`hermes -c` or `hermes --resume `), a "Previous Conversation" panel appears between the banner and the input prompt, showing a compact recap of the conversation history. See [Sessions — Conversation Recap on Resume](sessions.md#conversation-recap-on-resume) for details and configuration.

## Keybindings

| Key | Action |
|-----|--------|
| `Enter` | Send message |
| `Alt+Enter` or `Ctrl+J` | New line (multi-line input) |
| `Alt+V` | Paste an image from the clipboard when supported by the terminal |
| `Ctrl+V` | Paste text and opportunistically attach clipboard images |
| `Ctrl+B` | Start/stop voice recording when voice mode is enabled (`voice.record_key`, default: `ctrl+b`) |
| `Ctrl+G` | Open the current input buffer in `$EDITOR` (vim/nvim/nano/VS Code/etc.). Save and quit to send the edited text as the next prompt — ideal for long, multi-paragraph prompts. |
| `Ctrl+X Ctrl+E` | Emacs-style alternate binding for the external editor (same behavior as `Ctrl+G`). |
| `Ctrl+C` | Interrupt agent (double-press within 2s to force exit) |
| `Ctrl+D` | Exit |
| `Ctrl+Z` | Suspend Hermes to background (Unix only). Run `fg` in the shell to resume. |
| `Tab` | Accept auto-suggestion (ghost text) or autocomplete slash commands |

**Multiline paste preview.** When you paste a multi-line block, the CLI echoes a compact single-line preview (`[pasted: 47 lines, 1,842 chars — press Enter to send]`) instead of dumping the whole payload into the scrollback. The full content is still what gets sent; this is just display polish.

**Markdown stripping in final responses.** The CLI strips the most verbose markdown fences and `**bold**` / `*italic*` wrappers from *final* agent replies so they render as readable terminal prose rather than raw source. Code blocks and lists are preserved. This does not affect gateway platforms or tool results — they keep their markdown for native rendering.

## Slash Commands

Type `/` to see the autocomplete dropdown. Hermes supports a large set of CLI slash commands, dynamic skill commands, and user-defined quick commands.

Common examples:

| Command | Description |
|---------|-------------|
| `/help` | Show command help |
| `/model` | Show or change the current model |
| `/tools` | List currently available tools |
| `/skills browse` | Browse the skills hub and official optional skills |
| `/background ` | Run a prompt in a separate background session |
| `/skin` | Show or switch the active CLI skin |
| `/voice on` | Enable CLI voice mode (press `Ctrl+B` to record) |
| `/voice tts` | Toggle spoken playback for Hermes replies |
| `/reasoning high` | Increase reasoning effort |
| `/title My Session` | Name the current session |

For the full built-in CLI and messaging lists, see [Slash Commands Reference](../reference/slash-commands.md).

For setup, providers, silence tuning, and messaging/Discord voice usage, see [Voice Mode](features/voice-mode.md).

:::tip

Commands are case-insensitive — `/HELP` works the same as `/help`. Installed skills also become slash commands automatically.

:::

## Quick Commands

You can define custom commands that run shell commands instantly without invoking the LLM. These work in both the CLI and messaging platforms (Telegram, Discord, etc.).

```yaml
# ~/.hermes/config.yaml
quick_commands:
  status:
    type: exec
    command: systemctl status hermes-agent
  gpu:
    type: exec
    command: nvidia-smi --query-gpu=utilization.gpu,memory.used --format=csv,noheader
  restart:
    type: alias
    target: /gateway restart
```

Then type `/status`, `/gpu`, or `/restart` in any chat. See the [Configuration guide](/docs/user-guide/configuration#quick-commands) for more examples.
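Conceptually, an `exec` entry runs a shell command and returns its output, and an `alias` entry rewrites to another slash command. Below is a hypothetical Python sketch of that dispatch. The function name and the 30-second timeout are assumptions, not Hermes's code.

```python
import subprocess
from typing import Any, Dict


def run_quick_command(name: str, quick_commands: Dict[str, Dict[str, Any]]) -> str:
    """Resolve a /name quick command without calling the LLM (illustrative only)."""
    spec = quick_commands[name]
    if spec["type"] == "exec":
        # The config holds a shell string, so run it through the shell
        result = subprocess.run(
            spec["command"], shell=True, capture_output=True, text=True, timeout=30
        )
        return result.stdout + result.stderr
    if spec["type"] == "alias":
        # An alias only rewrites to another slash command, e.g. "/gateway restart"
        return spec["target"]
    raise ValueError(f"unknown quick command type: {spec['type']!r}")
```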

## Preloading Skills at Launch

If you already know which skills you want active for the session, pass them at launch time:

```bash
hermes -s hermes-agent-dev,github-auth
hermes chat -s github-pr-workflow -s github-auth
```

Hermes loads each named skill into the session prompt before the first turn. The same flag works in interactive mode and single-query mode.

## Skill Slash Commands

Every installed skill in `~/.hermes/skills/` is automatically registered as a slash command. The skill name becomes the command:

```
/gif-search funny cats
/axolotl help me fine-tune Llama 3 on my dataset
/github-pr-workflow create a PR for the auth refactor

# Just the skill name loads it and lets the agent ask what you need:
/excalidraw
```

## Personalities

Set a predefined personality to change the agent's tone:

```
/personality pirate
/personality kawaii
/personality concise
```

Built-in personalities include: `helpful`, `concise`, `technical`, `creative`, `teacher`, `kawaii`, `catgirl`, `pirate`, `shakespeare`, `surfer`, `noir`, `uwu`, `philosopher`, `hype`.

You can also define custom personalities in `~/.hermes/config.yaml`:

```yaml
personalities:
  helpful: "You are a helpful, friendly AI assistant."
  kawaii: "You are a kawaii assistant! Use cute expressions..."
  pirate: "Arrr! Ye be talkin' to Captain Hermes..."
  # Add your own!
```

## Multi-line Input

There are two ways to enter multi-line messages:

1. **`Alt+Enter` or `Ctrl+J`** — inserts a new line

2. **Backslash continuation** — end a line with `\` to continue:

```
❯ Write a function that:\
1. Takes a list of numbers\
2. Returns the sum
```

:::info

Pasting multi-line text is supported — use `Alt+Enter` or `Ctrl+J` to insert newlines, or simply paste content directly.

:::

## Interrupting the Agent

You can interrupt the agent at any point:

- **Type a new message + Enter** while the agent is working — it interrupts and processes your new instructions

- **`Ctrl+C`** — interrupt the current operation (press twice within 2s to force exit)

- In-progress terminal commands are killed immediately (SIGTERM, then SIGKILL after 1s)

- Multiple messages typed during interrupt are combined into one prompt
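Escalating from SIGTERM to SIGKILL is a standard POSIX pattern. Below is a minimal sketch using Python's `subprocess` module. Hermes's real implementation may differ, for example by signalling a whole process group.

```python
import subprocess


def stop_process(proc: subprocess.Popen, grace_seconds: float = 1.0) -> int:
    """Send SIGTERM, wait briefly, then SIGKILL if the process is still alive."""
    proc.terminate()  # SIGTERM on POSIX
    try:
        return proc.wait(timeout=grace_seconds)
    except subprocess.TimeoutExpired:
        proc.kill()  # SIGKILL on POSIX
        return proc.wait()
```

On POSIX, a negative return code is the number of the signal that ended the process.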

### Busy Input Mode

The `display.busy_input_mode` config key controls what happens when you press Enter while the agent is working:

| Mode | Behavior |
|------|----------|
| `"interrupt"` (default) | Your message interrupts the current operation and is processed immediately |
| `"queue"` | Your message is silently queued and sent as the next turn after the agent finishes |
| `"steer"` | Your message is injected into the current run via `/steer`, arriving at the agent after the next tool call — no interrupt, no new turn |

```yaml
# ~/.hermes/config.yaml
display:
  busy_input_mode: "steer"  # or "queue" or "interrupt" (default)
```

`"queue"` mode is useful when you want to prepare follow-up messages without accidentally canceling in-flight work. `"steer"` mode is useful when you want to redirect the agent mid-task without interrupting — e.g. "actually, also check the tests" while it's still editing code. Unknown values fall back to `"interrupt"`.

`"steer"` has two automatic fallbacks: if the agent hasn't started yet, or if images are attached, the message falls back to `"queue"` behavior so nothing is lost.
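Mode resolution can be sketched as a pure function. It covers both `"steer"` fallbacks and the default for unknown values. The name `effective_busy_mode` and its parameters are hypothetical.

```python
VALID_MODES = {"interrupt", "queue", "steer"}


def effective_busy_mode(configured: str, agent_started: bool, has_images: bool) -> str:
    """Resolve display.busy_input_mode, applying the documented fallbacks."""
    # Unknown values fall back to "interrupt"
    mode = configured if configured in VALID_MODES else "interrupt"
    # "steer" degrades to "queue" so nothing is lost
    if mode == "steer" and (not agent_started or has_images):
        return "queue"
    return mode
```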

You can also change it inside the CLI:

```text
/busy queue
/busy steer
/busy interrupt
/busy status
```

:::tip First-touch hint

The very first time you press Enter while Hermes is working, Hermes prints a one-line reminder explaining the `/busy` knob (`"(tip) Your message interrupted the current run…"`). It only fires once per install — a flag in `config.yaml` under `onboarding.seen.busy_input_prompt` latches it. Delete that key to see the tip again.

:::

### Suspending to Background

On Unix systems, press **`Ctrl+Z`** to suspend Hermes to the background — just like any terminal process. The shell prints a confirmation:

```
Hermes Agent has been suspended. Run `fg` to bring Hermes Agent back.
```

Type `fg` in your shell to resume the session exactly where you left off. This is not supported on Windows.

## Tool Progress Display

The CLI shows animated feedback as the agent works:

**Thinking animation** (during API calls):

```
◜ (。•́︿•̀。) pondering... (1.2s)
◠ (⊙_⊙) contemplating... (2.4s)
✧٩(ˊᗜˋ*)و✧ got it! (3.1s)
```

**Tool execution feed:**

```
┊ 💻 terminal `ls -la` (0.3s)
┊ 🔍 web_search (1.2s)
┊ 📄 web_extract (2.1s)
```

Cycle through display modes with `/verbose`: `off → new → all → verbose`. This command can also be enabled for messaging platforms — see [configuration](/docs/user-guide/configuration#display-settings).
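The cycling order can be modelled as an index that wraps around. This sketch assumes `/verbose` returns to `off` after `verbose`. The helper name is illustrative.

```python
MODES = ["off", "new", "all", "verbose"]


def next_tool_progress_mode(current: str) -> str:
    """Advance one step through the /verbose cycle, wrapping at the end (assumed)."""
    return MODES[(MODES.index(current) + 1) % len(MODES)]
```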

### Tool Preview Length

The `display.tool_preview_length` config key controls the maximum number of characters shown in tool call preview lines (e.g. file paths, terminal commands). The default is `0`, which means no limit — full paths and commands are shown.

```yaml
# ~/.hermes/config.yaml
display:
  tool_preview_length: 80  # Truncate tool previews to 80 chars (0 = no limit)
```

This is useful on narrow terminals or when tool arguments contain very long file paths.
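Here is a sketch of the truncation rule, where `0` disables the limit. The trailing `...` marker is an assumption; Hermes may mark truncation differently.

```python
def preview(text: str, max_len: int = 0) -> str:
    """Truncate a tool preview to max_len chars; 0 (or less) means no limit."""
    if max_len <= 0 or len(text) <= max_len:
        return text
    if max_len <= 3:
        return text[:max_len]  # no room for a marker
    return text[: max_len - 3] + "..."  # keep total length at max_len
```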

## Session Management

### Resuming Sessions

When you exit a CLI session, a resume command is printed:

```
Resume this session with:
  hermes --resume 20260225_143052_a1b2c3

Session: 20260225_143052_a1b2c3
Duration: 12m 34s
Messages: 28 (5 user, 18 tool calls)
```

Resume options:

```bash
hermes --continue                       # Resume the most recent CLI session
hermes -c                               # Short form
hermes -c "my project"                  # Resume a named session (latest in lineage)
hermes --resume 20260225_143052_a1b2c3  # Resume a specific session by ID
hermes --resume "refactoring auth"      # Resume by title
hermes -r 20260225_143052_a1b2c3        # Short form
```

Resuming restores the full conversation history from SQLite. The agent sees all previous messages, tool calls, and responses — just as if you never left.

Use `/title My Session Name` inside a chat to name the current session, or `hermes sessions rename ` from the command line. Use `hermes sessions list` to browse past sessions.

### Session Storage

CLI sessions are stored in Hermes's SQLite state database at `~/.hermes/state.db`. The database keeps:

- session metadata (ID, title, timestamps, token counters)

- message history

- lineage across compressed/resumed sessions

- full-text search indexes used by `session_search`

Some messaging adapters also keep per-platform transcript files alongside the database, but the CLI itself resumes from the SQLite session store.
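To look inside the database yourself, open it read-only so you cannot interfere with a running session. This sketch only lists table names, because the schema is not documented here. The default path matches the location above.

```python
import sqlite3
from contextlib import closing
from pathlib import Path
from typing import List


def list_tables(db_path: str = "~/.hermes/state.db") -> List[str]:
    """Open a SQLite database read-only and return its table names."""
    uri = Path(db_path).expanduser().resolve().as_uri() + "?mode=ro"
    # closing() ensures the connection is closed; sqlite3's own context
    # manager only commits or rolls back
    with closing(sqlite3.connect(uri, uri=True)) as conn:
        rows = conn.execute(
            "SELECT name FROM sqlite_master WHERE type = 'table' ORDER BY name"
        ).fetchall()
    return [name for (name,) in rows]
```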

### Context Compression

Long conversations are automatically summarized when approaching context limits:

```yaml
# In ~/.hermes/config.yaml
compression:
  enabled: true
  threshold: 0.50  # Compress at 50% of context limit by default

# Summarization model configured under auxiliary:
auxiliary:
  compression:
    model: "google/gemini-3-flash-preview"  # Model used for summarization
```

When compression triggers, middle turns are summarized while the first 3 and last 20 turns are always preserved.
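Below is a sketch of the trigger and the head/middle/tail split. It assumes the threshold is compared with `>=` and that conversations of 23 turns or fewer are left untouched. The function names are hypothetical.

```python
from typing import List, Sequence, Tuple, TypeVar

T = TypeVar("T")


def should_compress(used_tokens: int, context_limit: int, threshold: float = 0.50) -> bool:
    """True once usage reaches the configured fraction of the context window."""
    return used_tokens >= threshold * context_limit


def split_for_summary(
    turns: Sequence[T], keep_first: int = 3, keep_last: int = 20
) -> Tuple[List[T], List[T], List[T]]:
    """Return (head, middle, tail); only `middle` would go to the summarizer."""
    if len(turns) <= keep_first + keep_last:
        return list(turns), [], []  # nothing in the middle to summarize
    head = list(turns[:keep_first])
    middle = list(turns[keep_first:len(turns) - keep_last])
    tail = list(turns[len(turns) - keep_last:])
    return head, middle, tail
```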

## Background Sessions

Run a prompt in a separate background session while continuing to use the CLI for other work:

```
/background Analyze the logs in /var/log and summarize any errors from today
```

Hermes immediately confirms the task and gives you back the prompt:

```
🔄 Background task #1 started: "Analyze the logs in /var/log and summarize..."
Task ID: bg_143022_a1b2c3
```

### How It Works

Each `/background` prompt spawns a **completely separate agent session** in a daemon thread:

- **Isolated conversation** — the background agent has no knowledge of your current session's history. It receives only the prompt you provide.

- **Same configuration** — the background agent inherits your model, provider, toolsets, reasoning settings, and fallback model from the current session.

- **Non-blocking** — your foreground session stays fully interactive. You can chat, run commands, or even start more background tasks.

- **Multiple tasks** — you can run several background tasks simultaneously. Each gets a numbered ID.
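The daemon-thread model can be sketched as follows. `run_agent` stands in for whatever builds a fresh agent with the inherited configuration. Both it and `BackgroundRunner` are hypothetical names, not Hermes's API.

```python
import itertools
import threading
from typing import Callable, Dict


class BackgroundRunner:
    """Run each prompt in its own daemon thread with a numbered task ID."""

    def __init__(self, run_agent: Callable[[str], str]) -> None:
        self._run_agent = run_agent  # hypothetical: fresh agent, no shared history
        self._counter = itertools.count(1)
        self._lock = threading.Lock()
        self.results: Dict[int, str] = {}
        self.threads: Dict[int, threading.Thread] = {}

    def start(self, prompt: str) -> int:
        task_no = next(self._counter)

        def work() -> None:
            try:
                out = self._run_agent(prompt)  # only the prompt is passed in
            except Exception as exc:  # report failures instead of crashing
                out = f"error: {exc}"
            with self._lock:
                self.results[task_no] = out

        t = threading.Thread(target=work, name=f"bg-{task_no}", daemon=True)
        self.threads[task_no] = t
        t.start()  # returns immediately; the foreground stays interactive
        return task_no
```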

### Results

When a background task finishes, the result appears as a panel in your terminal:

```
╭─ ⚕ Hermes (background #1) ──────────────────────────────────╮
│ Found 3 errors in syslog from today: │
│ 1. OOM killer invoked at 03:22 — killed process nginx │
│ 2. Disk I/O error on /dev/sda1 at 07:15 │
│ 3. Failed SSH login attempts from 192.168.1.50 at 14:30 │
╰──────────────────────────────────────────────────────────────╯
```

If the task fails, you'll see an error notification instead. If `display.bell_on_complete` is enabled in your config, the terminal bell rings when the task finishes.

### Use Cases

- **Long-running research** — "/background research the latest developments in quantum error correction" while you work on code

- **File processing** — "/background analyze all Python files in this repo and list any security issues" while you continue a conversation

- **Parallel investigations** — start multiple background tasks to explore different angles simultaneously

:::info

Background sessions do not appear in your main conversation history. They are standalone sessions with their own task ID (e.g., `bg_143022_a1b2c3`).

:::

## Quiet Mode

By default, the CLI runs in quiet mode which:

- Suppresses verbose logging from tools

- Enables kawaii-style animated feedback

- Keeps output clean and user-friendly

For debug output:

```bash
hermes chat --verbose
```

---

<!-- source: website/docs/user-guide/tui.md -->

# TUI


The TUI is the modern front-end for Hermes — a terminal UI backed by the same Python runtime as the [Classic CLI](cli.md). Same agent, same sessions, same slash commands; a cleaner, more responsive surface for interacting with them.

It's the recommended way to run Hermes interactively.

## Launch

```bash
# Launch the TUI
hermes --tui

# Resume the latest TUI session (falls back to the latest classic session)
hermes --tui -c
hermes --tui --continue

# Resume a specific session by ID or title
hermes --tui -r 20260409_000000_aa11bb
hermes --tui --resume "my t0p session"

# Run source directly — skips the prebuild step (for TUI contributors)
hermes --tui --dev
```

You can also enable it via env var:

```bash
export HERMES_TUI=1
hermes       # now uses the TUI
hermes chat  # same
```

The classic CLI remains available as the default. Anything documented in [CLI Interface](cli.md) — slash commands, quick commands, skill preloading, personalities, multi-line input, interrupts — works in the TUI identically.

## Why the TUI

- **Instant first frame** — the banner paints before the app finishes loading, so the terminal never feels frozen while Hermes is starting.

- **Non-blocking input** — type and queue messages before the session is ready. Your first prompt sends the moment the agent comes online.

- **Rich overlays** — model picker, session picker, approval and clarification prompts all render as modal panels rather than inline flows.

- **Live session panel** — tools and skills fill in progressively as they initialize.

- **Mouse-friendly selection** — drag to highlight with a uniform background instead of SGR inverse. Copy with your terminal's normal copy gesture.

- **Alternate-screen rendering** — differential updates mean no flicker when streaming, no scrollback clutter after you quit.

- **Composer affordances** — inline paste-collapse for long snippets, `Cmd+V` / `Ctrl+V` text paste with clipboard-image fallback, bracketed-paste safety, and image/file-path attachment normalization.

Same [skins](features/skins.md) and [personalities](features/personality.md) apply. Switch mid-session with `/skin ares`, `/personality pirate`, and the UI repaints live. See [Skins & Themes](features/skins.md) for the full list of customizable keys and which ones apply to classic vs TUI — the TUI honors the banner palette, UI colors, prompt glyph/color, session display, completion menu, selection bg, `tool_prefix`, and `help_header`.

## Requirements

- **Node.js** ≥ 20 — the TUI runs as a subprocess launched from the Python CLI. `hermes doctor` verifies this.

- **TTY** — like the classic CLI, piping stdin or running in non-interactive environments falls back to single-query mode.

On first launch Hermes installs the TUI's Node dependencies into `ui-tui/node_modules` (one-time, a few seconds). Subsequent launches are fast. If you pull a new Hermes version, the TUI bundle is rebuilt automatically when sources are newer than the dist.
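A staleness check like this usually compares modification times. Below is a minimal sketch. It assumes the sources live under one directory and the build emits a single `dist/entry.js`. The paths and the function name are illustrative.

```python
from pathlib import Path


def needs_rebuild(src_dir: Path, dist_file: Path) -> bool:
    """True if dist_file is missing or any file under src_dir is newer than it."""
    if not dist_file.exists():
        return True
    built_at = dist_file.stat().st_mtime_ns
    for path in src_dir.rglob("*"):
        if path.is_file() and path.stat().st_mtime_ns > built_at:
            return True
    return False
```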

### External prebuild

Distributions that ship a prebuilt bundle (Nix, system packages) can point Hermes at it:

```bash
export HERMES_TUI_DIR=/path/to/prebuilt/ui-tui
hermes --tui
```

The directory must contain `dist/entry.js` and an up-to-date `node_modules`.
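A quick sanity check for a candidate directory might look like this. It only verifies that the two paths exist; it cannot tell whether `node_modules` is up to date. The helper is hypothetical.

```python
from pathlib import Path
from typing import List


def check_prebuilt_tui_dir(path: str) -> List[str]:
    """List problems with a HERMES_TUI_DIR candidate (empty list = looks usable)."""
    root = Path(path)
    problems: List[str] = []
    if not (root / "dist" / "entry.js").is_file():
        problems.append("missing dist/entry.js")
    if not (root / "node_modules").is_dir():
        problems.append("missing node_modules/")
    return problems
```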

## Keybindings

Keybindings match the [Classic CLI](cli.md#keybindings) exactly. The only behavioral differences:

- **Mouse drag** highlights text with a uniform selection background.

- **`Cmd+V` / `Ctrl+V`** first tries normal text paste, then falls back to OSC52/native clipboard reads, and finally image attach when the clipboard or pasted payload resolves to an image.

- **`/terminal-setup`** installs local VS Code / Cursor / Windsurf terminal bindings for better `Cmd+Enter` and undo/redo parity on macOS.

- **Slash autocompletion** opens as a floating panel with descriptions, not an inline dropdown.

- **`Ctrl+X`** — when a queued message is highlighted (sent while the agent was still running), delete it from the queue. **`Esc`** cancels editing and unhighlights without deleting.

- **`Ctrl+G` / `Ctrl+X Ctrl+E`** — open the current input buffer in `$EDITOR` for multi-line / long-prompt composition; save-and-exit sends the contents back as the prompt.

## Slash commands

All slash commands work unchanged. A few are TUI-owned — they produce richer output or render as overlays rather than inline panels:

| Command | TUI behavior |
|---------|--------------|
| `/help` | Overlay with categorized commands, arrow-key navigable |
| `/sessions` | Modal session picker — preview, title, token totals, resume inline |
| `/model` | Modal model picker grouped by provider, with cost hints |
| `/skin` | Live preview — theme change applies as you browse |
| `/details` | Toggle verbose tool-call details (global or per-section) |
| `/usage` | Rich token / cost / context panel |
| `/agents` (alias `/tasks`) | Observability overlay — live subagent tree with kill/pause controls, per-branch cost / token / file rollups, turn-by-turn history |
| `/reload` | Re-reads `~/.hermes/.env` into the running TUI process so newly added API keys take effect without a restart |
| `/mouse` | Toggle mouse tracking on/off at runtime (also persists to `display.mouse_tracking` in `config.yaml`) |

Every other slash command (including installed skills, quick commands, and personality toggles) works identically to the classic CLI. See [Slash Commands Reference](../reference/slash-commands.md).

## LaTeX math rendering

The TUI's markdown pipeline renders LaTeX math inline: `$E = mc^2$` and `$$\frac{a}{b}$$` render as Unicode-formatted math instead of the raw TeX source. Works for inline and block math; unsupported syntax falls back to showing the literal TeX wrapped in a code span so it remains copyable.

This is always-on — nothing to configure. Classic CLI keeps the raw TeX.

## Light-terminal detection

The TUI auto-detects light terminals and swaps to the light theme accordingly. Detection works in three layers:

1. `HERMES_TUI_THEME` env var — highest priority. Values: `light`, `dark`, or a raw 6-char background hex (e.g. `ffffff`, `1a1a2e`).

2. `COLORFGBG` env var — the classic "what's my background color?" hint used by xterm-derived terminals.

3. Terminal background probe via OSC 11 — works on modern terminals (Ghostty, Warp, iTerm2, WezTerm, Kitty) that don't set `COLORFGBG`.
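The three-layer priority order can be sketched as follows. Several details are assumptions:

- the luminance cut-off;
- the COLORFGBG rule, where a background index of 0 to 6 or 8 means dark (a common heuristic);
- the `dark` default;
- the `probe_bg` callback, which stands in for the real OSC 11 query.

```python
from typing import Callable, Mapping, Optional


def _is_light(hex6: str) -> bool:
    """Rec. 709 luma of an RRGGBB string; above 0.5 counts as light (assumed cut-off)."""
    r, g, b = (int(hex6[i:i + 2], 16) / 255 for i in (0, 2, 4))
    return 0.2126 * r + 0.7152 * g + 0.0722 * b > 0.5


def resolve_theme(
    env: Mapping[str, str], probe_bg: Optional[Callable[[], Optional[str]]] = None
) -> str:
    # 1. Explicit override: "light", "dark", or a 6-char background hex
    override = env.get("HERMES_TUI_THEME", "").strip().lower()
    if override in ("light", "dark"):
        return override
    if len(override) == 6 and all(c in "0123456789abcdef" for c in override):
        return "light" if _is_light(override) else "dark"
    # 2. COLORFGBG, e.g. "15;0": the last field is the background color index
    bg = env.get("COLORFGBG", "").split(";")[-1]
    if bg.isdigit():
        return "dark" if int(bg) in (0, 1, 2, 3, 4, 5, 6, 8) else "light"
    # 3. OSC 11 probe, injected here as a callback returning RRGGBB or None
    if probe_bg is not None:
        probed = probe_bg()
        if probed:
            return "light" if _is_light(probed) else "dark"
    return "dark"  # assumed fallback when nothing is detected
```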

If you want the light theme permanently regardless of terminal:

```bash
export HERMES_TUI_THEME=light
```

## Busy indicator styles

The status-bar FaceTicker is pluggable — the default rotates Hermes' kawaii face palette every 2.5 seconds during agent work. Pick a different style (or `none` for a minimal dot) via config:

```yaml
display:
  busy_indicator:
    style: kawaii  # kawaii | minimal | dots | wings | none
```

Styles ship with matched glyph widths so the rest of the status bar doesn't jitter on rotation.

## Auto-resume

By default, `hermes --tui` starts a fresh session each launch. To re-attach to the most recent TUI session automatically (useful when your terminal or SSH connection drops unexpectedly), opt in:

```bash
export HERMES_TUI_RESUME=1             # most-recent TUI session
# or:
export HERMES_TUI_RESUME=<session-id>  # specific session
```

Unset the variable or pass `--resume <id>` explicitly to override on a per-launch basis.
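The resulting precedence is: explicit `--resume` first, then `HERMES_TUI_RESUME`, then a fresh session. Here it is as a small hypothetical resolver.

```python
from typing import Mapping, Optional


def tui_resume_target(cli_resume: Optional[str], env: Mapping[str, str]) -> Optional[str]:
    """Return "latest", a session ID, or None for a fresh session."""
    if cli_resume:  # explicit --resume <id> always wins
        return cli_resume
    value = env.get("HERMES_TUI_RESUME", "").strip()
    if not value:
        return None  # default: start a fresh session
    if value == "1":
        return "latest"  # most recent TUI session
    return value  # anything else is treated as a session ID
```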

## Status line

The TUI's status line tracks agent state in real time:

| Status | Meaning |
|--------|---------|
| `starting agent…` | Session ID is live; tools and skills still coming online. You can type — messages queue and send when ready. |
| `ready` | Agent is idle, accepting input. |
| `thinking…` / `running…` | Agent is reasoning or running a tool. |
| `interrupted` | Current turn was cancelled; press Enter to send again. |
| `forging session…` / `resuming…` | Initial connect or `--resume` handshake. |

The per-skin status-bar colors and thresholds are shared with the classic CLI — see [Skins](features/skins.md) for customization.

The status line also shows:

- **Working directory with git branch** — `~/projects/hermes-agent (docs/two-week-gap-sweep)`. The branch suffix updates when you `git checkout` in a side terminal (mtime-cached) so the TUI reflects your actual active branch, not whatever it was at launch.

- **Per-prompt elapsed time** — `⏱ 12s/3m 45s` while the turn is running (live), frozen to `⏲ 32s / 3m 45s` after the turn completes. First number is time since last user message; second is total session duration. Resets on every new prompt.
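An mtime-cached branch lookup can be sketched by re-reading `.git/HEAD` only when its modification time changes. This is an illustration, not Hermes's code. It assumes a regular `.git` directory (linked worktrees use a `.git` file instead) and shows a detached HEAD as a short hash.

```python
import os
from typing import Optional


class BranchCache:
    """Return the current git branch, re-reading .git/HEAD only when its mtime changes."""

    def __init__(self, repo_root: str) -> None:
        self.head_path = os.path.join(repo_root, ".git", "HEAD")
        self._mtime: Optional[int] = None
        self._branch: Optional[str] = None

    def branch(self) -> Optional[str]:
        try:
            mtime = os.stat(self.head_path).st_mtime_ns
        except FileNotFoundError:
            return None  # not a (plain) git checkout
        if mtime != self._mtime:  # cache miss: HEAD was rewritten, e.g. by git checkout
            with open(self.head_path, encoding="utf-8") as f:
                head = f.read().strip()
            prefix = "ref: refs/heads/"
            self._branch = head[len(prefix):] if head.startswith(prefix) else head[:7]
            self._mtime = mtime
        return self._branch
```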

## Configuration

The TUI respects all standard Hermes config: `~/.hermes/config.yaml`, profiles, personalities, skins, quick commands, credential pools, memory providers, tool/skill enablement. No TUI-specific config file exists.

A handful of keys tune the TUI surface specifically:

```yaml
display:
  skin: default            # any built-in or custom skin
  personality: helpful
  details_mode: collapsed  # hidden | collapsed | expanded — global accordion default
  sections:                # optional: per-section overrides (any subset)
    thinking: expanded     # always open
    tools: expanded        # always open
    activity: collapsed    # opt back IN to the activity panel (hidden by default)
  mouse_tracking: true     # disable if your terminal conflicts with mouse reporting
```

Runtime toggles:

- `/details [hidden|collapsed|expanded|cycle]` — set the global mode
- `/details <section> [hidden|collapsed|expanded|reset]` — override one section (sections: `thinking`, `tools`, `subagents`, `activity`)

**Default visibility**

The TUI ships with opinionated per-section defaults that stream the turn as a live transcript instead of a wall of chevrons:

- `thinking` — **expanded**. Reasoning streams inline as the model emits it.
- `tools` — **expanded**. Tool calls and their results render open.
- `subagents` — falls through to the global `details_mode` (collapsed under chevron by default — stays quiet until a delegation actually happens).
- `activity` — **hidden**. Ambient meta (gateway hints, terminal-parity nudges, background notifications) is noise for most day-to-day use. Tool failures still render inline on the failing tool row; ambient errors/warnings surface via a floating-alert backstop when every panel is hidden.

Per-section overrides take precedence over both the section default and the global `details_mode`. To reshape the layout:

- `display.sections.thinking: collapsed` — put thinking back under a chevron

- `display.sections.tools: collapsed` — put tool calls back under a chevron

- `display.sections.activity: collapsed` — opt the activity panel back in

- `/details <section> <mode>` at runtime

Anything set explicitly in `display.sections` wins over the defaults, so existing configs keep working unchanged.
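These precedence rules can be sketched as a lookup chain. One step is inferred: that the built-in section defaults also outrank the global `details_mode`. This follows from the description of `subagents` falling through. `section_mode` is a hypothetical helper.

```python
from typing import Mapping, Optional

SECTION_DEFAULTS = {"thinking": "expanded", "tools": "expanded", "activity": "hidden"}
# "subagents" has no default of its own and falls through to details_mode.


def section_mode(
    section: str,
    details_mode: str = "collapsed",
    overrides: Optional[Mapping[str, str]] = None,
) -> str:
    overrides = overrides or {}
    if section in overrides:  # display.sections.<name> wins
        return overrides[section]
    if section in SECTION_DEFAULTS:  # then the built-in per-section default
        return SECTION_DEFAULTS[section]
    return details_mode  # then the global accordion default
```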

## Sessions

Sessions are shared between the TUI and the classic CLI — both write to the same `~/.hermes/state.db`. You can start a session in one, resume in the other. The session picker surfaces sessions from both sources, with a source tag.

See [Sessions](sessions.md) for lifecycle, search, compression, and export.

## Reverting to the classic CLI

Launching `hermes` (without `--tui`) stays on the classic CLI. To make a machine prefer the TUI, set `HERMES_TUI=1` in your shell profile. To go back, unset it.

If the TUI fails to launch (no Node, missing bundle, TTY issue), Hermes prints a diagnostic and falls back — rather than leaving you stuck.

## See also

- [CLI Interface](cli.md) — full slash command and keybinding reference (shared)

- [Sessions](sessions.md) — resume, branch, and history

- [Skins & Themes](features/skins.md) — theme the banner, status bar, and overlays

- [Voice Mode](features/voice-mode.md) — works in both interfaces

- [Configuration](configuration.md) — all config keys

---

<!-- source: website/docs/user-guide/configuration.md -->

# Configuration


All settings are stored in the `~/.hermes/` directory for easy access.

## Directory Structure

```text
~/.hermes/
├── config.yaml   # Settings (model, terminal, TTS, compression, etc.)
├── .env          # API keys and secrets
├── auth.json     # OAuth provider credentials (Nous Portal, etc.)
├── SOUL.md       # Primary agent identity (slot #1 in system prompt)
├── memories/     # Persistent memory (MEMORY.md, USER.md)
├── skills/       # Agent-created skills (managed via skill_manage tool)
├── cron/         # Scheduled jobs
├── sessions/     # Gateway sessions
└── logs/         # Logs (errors.log, gateway.log — secrets auto-redacted)
```

## Managing Configuration

```bash
hermes config              # View current configuration
hermes config edit         # Open config.yaml in your editor
hermes config set KEY VAL  # Set a specific value
hermes config check        # Check for missing options (after updates)
hermes config migrate      # Interactively add missing options

# Examples:
hermes config set model anthropic/claude-opus-4
hermes config set terminal.backend docker
hermes config set OPENROUTER_API_KEY sk-or-...  # Saves to .env
```

:::tip

The `hermes config set` command automatically routes values to the right file — API keys are saved to `.env`, everything else to `config.yaml`.

:::

## Configuration Precedence

Settings are resolved in this order (highest priority first):

1. **CLI arguments** — e.g., `hermes chat --model anthropic/claude-sonnet-4` (per-invocation override)

2. **`~/.hermes/config.yaml`** — the primary config file for all non-secret settings

3. **`~/.hermes/.env`** — fallback for env vars; **required** for secrets (API keys, tokens, passwords)

4. **Built-in defaults** — hardcoded safe defaults when nothing else is set

:::info Rule of Thumb

Secrets (API keys, bot tokens, passwords) go in `.env`. Everything else (model, terminal backend, compression settings, memory limits, toolsets) goes in `config.yaml`. When both are set, `config.yaml` wins for non-secret settings.

:::
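The resolution order behaves like a chain of dictionaries in which earlier layers shadow later ones. Below is a sketch using Python's `collections.ChainMap`. It uses flat keys for simplicity; the real config is nested YAML, and `.env` holds environment variable names.

```python
from collections import ChainMap
from typing import Any, Dict, Optional


def effective_settings(
    cli_args: Dict[str, Optional[Any]],
    config_yaml: Dict[str, Any],
    dotenv: Dict[str, Any],
    defaults: Dict[str, Any],
) -> ChainMap:
    """Layer settings so earlier sources shadow later ones (highest priority first)."""
    # Flags the user did not pass arrive as None and must not shadow lower layers
    passed = {k: v for k, v in cli_args.items() if v is not None}
    return ChainMap(passed, config_yaml, dotenv, defaults)
```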

## Environment Variable Substitution

You can reference environment variables in `config.yaml` using `${VAR_NAME}` syntax:

```yaml
auxiliary:
  vision:
    api_key: ${GOOGLE_API_KEY}
    base_url: ${CUSTOM_VISION_URL}
delegation:
  api_key: ${DELEGATION_KEY}
```

Multiple references in a single value work: `url: "${HOST}:${PORT}"`. If a referenced variable is not set, the placeholder is kept verbatim (`${UNDEFINED_VAR}` stays as-is). Only the `${VAR}` syntax is supported — bare `$VAR` is not expanded.
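These substitution rules map onto a single regular expression. The sketch assumes variable names follow the usual `[A-Za-z_][A-Za-z0-9_]*` shape; Hermes's own parser may accept a different set.

```python
import os
import re
from typing import Mapping, Optional

_VAR = re.compile(r"\$\{([A-Za-z_][A-Za-z0-9_]*)\}")


def expand_env(value: str, env: Optional[Mapping[str, str]] = None) -> str:
    """Expand ${VAR} references; unset vars stay verbatim and bare $VAR is untouched."""
    env = os.environ if env is None else env
    return _VAR.sub(lambda m: env.get(m.group(1), m.group(0)), value)
```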

For AI provider setup (OpenRouter, Anthropic, Copilot, custom endpoints, self-hosted LLMs, fallback models, etc.), see [AI Providers](/docs/integrations/providers).

### Provider Timeouts

You can set `providers.<id>.request_timeout_seconds` for a provider-wide request timeout, plus `providers.<id>.models.<model>.timeout_seconds` for a model-specific override. These settings apply to the primary turn client on every transport (OpenAI-wire, native Anthropic, Anthropic-compatible), the fallback chain, client rebuilds after credential rotation, and (for OpenAI-wire) the per-request timeout kwarg, so the configured value wins over the legacy `HERMES_API_TIMEOUT` env var.

You can also set `providers.<id>.stale_timeout_seconds` for the non-streaming stale-call detector, plus `providers.<id>.models.<model>.stale_timeout_seconds` for a model-specific override. This wins over the legacy `HERMES_API_CALL_STALE_TIMEOUT` env var.

Leaving these unset keeps the legacy defaults (`HERMES_API_TIMEOUT=1800`s, `HERMES_API_CALL_STALE_TIMEOUT=300`s, native Anthropic 900s). Not currently wired for AWS Bedrock (both `bedrock_converse` and AnthropicBedrock SDK paths use boto3 with its own timeout configuration). See the commented example in [`cli-config.yaml.example`](https://github.com/NousResearch/hermes-agent/blob/main/cli-config.yaml.example).
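For the request timeout, the lookup order described above can be sketched as follows. The provider and model keys mirror the config paths, but the function itself is hypothetical. It ignores the native Anthropic 900 s default and the stale-call detector.

```python
import os
from typing import Any, Mapping, Optional

LEGACY_DEFAULT_TIMEOUT = 1800.0  # HERMES_API_TIMEOUT default, per the docs


def request_timeout(
    providers: Mapping[str, Any],
    provider_id: str,
    model: str,
    env: Optional[Mapping[str, str]] = None,
) -> float:
    env = os.environ if env is None else env
    provider = providers.get(provider_id, {})
    model_cfg = provider.get("models", {}).get(model, {})
    if "timeout_seconds" in model_cfg:  # model-specific override
        return float(model_cfg["timeout_seconds"])
    if "request_timeout_seconds" in provider:  # provider-wide setting
        return float(provider["request_timeout_seconds"])
    if "HERMES_API_TIMEOUT" in env:  # legacy env var
        return float(env["HERMES_API_TIMEOUT"])
    return LEGACY_DEFAULT_TIMEOUT
```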

## Terminal Backend Configuration

Hermes supports seven terminal backends. Each determines where the agent's shell commands actually execute — your local machine, a Docker container, a remote server via SSH, a Modal cloud sandbox (direct or via the Nous-managed gateway), a Daytona workspace, a Vercel Sandbox, or a Singularity/Apptainer container.

```yaml
terminal:
  backend: local  # local | docker | ssh | modal | daytona | vercel_sandbox | singularity
  cwd: "."        # Gateway/cron working directory (CLI always uses launch dir)
  timeout: 180    # Per-command timeout in seconds
  env_passthrough: []  # Env var names to forward to sandboxed execution (terminal + execute_code)
  singularity_image: "docker://nikolaik/python-nodejs:python3.11-nodejs20"  # Container image for Singularity backend
  modal_image: "nikolaik/python-nodejs:python3.11-nodejs20"  # Container image for Modal backend
  daytona_image: "nikolaik/python-nodejs:python3.11-nodejs20"  # Container image for Daytona backend
```

For cloud sandboxes such as Modal, Daytona, and Vercel Sandbox, `container_persistent: true` means Hermes will try to preserve filesystem state across sandbox recreation. It does not promise that the same live sandbox, PID space, or background processes will still be running later.

### Backend Overview

| Backend | Where commands run | Isolation | Best for |
|---------|-------------------|-----------|----------|
| **local** | Your machine directly | None | Development, personal use |
| **docker** | Single persistent Docker container (shared across session, `/new`, subagents) | Full (namespaces, cap-drop) | Safe sandboxing, CI/CD |
| **ssh** | Remote server via SSH | Network boundary | Remote dev, powerful hardware |
| **modal** | Modal cloud sandbox | Full (cloud VM) | Ephemeral cloud compute, evals |
| **daytona** | Daytona workspace | Full (cloud container) | Managed cloud dev environments |
| **vercel_sandbox** | Vercel Sandbox | Full (cloud microVM) | Cloud execution with snapshot-backed filesystem persistence |
| **singularity** | Singularity/Apptainer container | Namespaces (--containall) | HPC clusters, shared machines |

### Local Backend

The default. Commands run directly on your machine with no isolation. No special setup required.

```yaml
terminal:
  backend: local
```

:::warning

The agent has the same filesystem access as your user account. Use `hermes tools` to disable tools you don't want, or switch to Docker for sandboxing.

:::

### Docker Backend

Runs commands inside a Docker container with security hardening (all capabilities dropped, no privilege escalation, PID limits).

**Single persistent container, not per-command.** Hermes starts ONE long-lived container on first use and routes every terminal, file, and `execute_code` call through `docker exec` into that same container — across sessions, `/new`, `/reset`, and `delegate_task` subagents — for the lifetime of the Hermes process. Working-directory changes, installed packages, and files in `/workspace` carry over from one tool call to the next, just like a local shell. The container is stopped and removed on shutdown. See **Container lifecycle** below for details.

```yaml
terminal:
  backend: docker
  docker_image: "nikolaik/python-nodejs:python3.11-nodejs20"
  docker_mount_cwd_to_workspace: false # Mount launch dir into /workspace
  docker_run_as_host_user: false # See "Running the Container as Your Host User" below
  docker_forward_env: # Env vars to forward into container
    - "GITHUB_TOKEN"
  docker_volumes: # Host directory mounts
    - "/home/user/projects:/workspace/projects"
    - "/home/user/data:/data:ro" # :ro for read-only
  # Resource limits
  container_cpu: 1 # CPU cores (0 = unlimited)
  container_memory: 5120 # MB (0 = unlimited)
  container_disk: 51200 # MB (requires overlay2 on XFS+pquota)
  container_persistent: true # Persist /workspace and /root across sessions
```

**Requirements:** Docker Desktop or Docker Engine installed and running. Hermes probes `$PATH` plus common macOS install locations (`/usr/local/bin/docker`, `/opt/homebrew/bin/docker`, Docker Desktop app bundle). Podman is supported out of the box: set `HERMES_DOCKER_BINARY=podman` (or the full path) to force it when both are installed.

**Container lifecycle:** Hermes reuses a single long-lived container (`docker run -d ... sleep 2h`) for every terminal and file-tool call, across sessions, `/new`, `/reset`, and `delegate_task` subagents, for the lifetime of the Hermes process. Commands run via `docker exec` with a login shell, so working-directory changes, installed packages, and files in `/workspace` all persist from one tool call to the next. The container is stopped and removed on Hermes shutdown (or when the idle-sweep reclaims it).

Parallel subagents spawned via `delegate_task(tasks=[...])` share this one container — concurrent `cd`, env mutations, and writes to the same path will collide. If a subagent needs an isolated sandbox, it must register a per-task image override via `register_task_env_overrides()`, which RL and benchmark environments (TerminalBench2, HermesSweEnv, etc.) do automatically for their per-task Docker images.

**Security hardening:**

- `--cap-drop ALL` with only `DAC_OVERRIDE`, `CHOWN`, `FOWNER` added back

- `--security-opt no-new-privileges`

- `--pids-limit 256`

- Size-limited tmpfs for `/tmp` (512MB), `/var/tmp` (256MB), `/run` (64MB)

**Credential forwarding:** Env vars listed in `docker_forward_env` are resolved from your shell environment first, then `~/.hermes/.env`. Skills can also declare `required_environment_variables` which are merged automatically.

### SSH Backend

Runs commands on a remote server over SSH. Uses ControlMaster for connection reuse (5-minute idle keepalive). Persistent shell is enabled by default — state (cwd, env vars) survives across commands.

```yaml
terminal:
  backend: ssh
  persistent_shell: true # Keep a long-lived bash session (default: true)
```

**Required environment variables:**

```bash

TERMINAL_SSH_HOST=my-server.example.com

TERMINAL_SSH_USER=ubuntu

```

**Optional:**

| Variable | Default | Description |
|----------|---------|-------------|
| `TERMINAL_SSH_PORT` | `22` | SSH port |
| `TERMINAL_SSH_KEY` | (system default) | Path to SSH private key |
| `TERMINAL_SSH_PERSISTENT` | `true` | Enable persistent shell |

**How it works:** Connects at init time with `BatchMode=yes` and `StrictHostKeyChecking=accept-new`. Persistent shell keeps a single `bash -l` process alive on the remote host, communicating via temporary files. Commands that need `stdin_data` or `sudo` automatically fall back to one-shot mode.

### Modal Backend

Runs commands in a [Modal](https://modal.com) cloud sandbox. Each task gets an isolated VM with configurable CPU, memory, and disk. The filesystem can be snapshotted and restored across sessions.

```yaml
terminal:
  backend: modal
  container_cpu: 1 # CPU cores
  container_memory: 5120 # MB (5GB)
  container_disk: 51200 # MB (50GB)
  container_persistent: true # Snapshot/restore filesystem
```

**Required:** Either `MODAL_TOKEN_ID` + `MODAL_TOKEN_SECRET` environment variables, or a `~/.modal.toml` config file.

**Persistence:** When enabled, the sandbox filesystem is snapshotted on cleanup and restored on next session. Snapshots are tracked in `~/.hermes/modal_snapshots.json`. This preserves filesystem state, not live processes, PID space, or background jobs.

**Credential files:** Automatically mounted from `~/.hermes/` (OAuth tokens, etc.) and synced before each command.

### Daytona Backend

Runs commands in a [Daytona](https://daytona.io) managed workspace. Supports stop/resume for persistence.

```yaml
terminal:
  backend: daytona
  container_cpu: 1 # CPU cores
  container_memory: 5120 # MB → converted to GiB
  container_disk: 10240 # MB → converted to GiB (max 10 GiB)
  container_persistent: true # Stop/resume instead of delete
```

**Required:** `DAYTONA_API_KEY` environment variable.

**Persistence:** When enabled, sandboxes are stopped (not deleted) on cleanup and resumed on next session. Sandbox names follow the pattern `hermes-{task_id}`.

**Disk limit:** Daytona enforces a 10 GiB maximum. Requests above this are capped with a warning.
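A sketch of the MB-to-GiB conversion and the 10 GiB disk cap, assuming Hermes' "MB" means MiB and that partial GiB are rounded up (the real rounding rule is not documented here). `daytona_resources` and its dict keys are hypothetical and are not the Daytona SDK's own types.

```python
import math
import warnings

DAYTONA_MAX_DISK_GIB = 10


def mb_to_gib(mb: int) -> int:
    """Treat the config's MB as MiB and round up to whole GiB (rounding is assumed)."""
    return math.ceil(mb / 1024)


def daytona_resources(cpu: int, memory_mb: int, disk_mb: int) -> dict:
    disk_gib = mb_to_gib(disk_mb)
    if disk_gib > DAYTONA_MAX_DISK_GIB:
        # Requests above the limit are capped with a warning, not rejected.
        warnings.warn(
            f"container_disk={disk_mb} MB exceeds Daytona's {DAYTONA_MAX_DISK_GIB} GiB limit; capping"
        )
        disk_gib = DAYTONA_MAX_DISK_GIB
    return {"cpu": cpu, "memory": mb_to_gib(memory_mb), "disk": disk_gib}
```

With the example config above, `daytona_resources(1, 5120, 10240)` yields 5 GiB of memory and exactly the 10 GiB disk maximum.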

### Vercel Sandbox Backend

Runs commands in a [Vercel Sandbox](https://vercel.com/docs/vercel-sandbox) cloud microVM. Hermes uses the normal terminal and file tool surfaces; there are no Vercel-specific model-facing tools.

```yaml
terminal:
  backend: vercel_sandbox
  vercel_runtime: node24 # node24 | node22 | python3.13
  cwd: /vercel/sandbox # default workspace root
  container_persistent: true # Snapshot/restore filesystem
  container_disk: 51200 # Shared default only; custom disk is unsupported
```

**Required install:** Install the optional SDK extra:

```bash

pip install 'hermes-agent[vercel]'

```

**Required authentication:** Configure access-token auth with all three of `VERCEL_TOKEN`, `VERCEL_PROJECT_ID`, and `VERCEL_TEAM_ID`. This is the supported setup for deployments and normal long-running Hermes processes on Render, Railway, Docker, and similar hosts.

For one-off local development, Hermes also accepts short-lived Vercel OIDC tokens:

```bash

VERCEL_OIDC_TOKEN="$(vc project token <project-name>)" hermes chat

```

From a linked Vercel project directory, you can omit the project name:

```bash

VERCEL_OIDC_TOKEN="$(vc project token)" hermes chat

```

OIDC tokens are short-lived and should not be used as the documented deployment path.

**Runtime:** `terminal.vercel_runtime` supports `node24`, `node22`, and `python3.13`. If unset, Hermes defaults to `node24`.

**Persistence:** When `container_persistent: true`, Hermes snapshots the sandbox filesystem during cleanup and restores a later sandbox for the same task from that snapshot. Snapshot contents can include Hermes-synced credentials, skills, and cache files that were copied into the sandbox. This preserves filesystem state only; it does not preserve live sandbox identity, PID space, shell state, or running background processes.

**Background commands:** `terminal(background=true)` uses Hermes' generic non-local background process flow. You can spawn, poll, wait, view logs, and kill processes through the normal process tool while the sandbox is alive. Hermes does not provide native Vercel detached-process recovery after cleanup or restart.

**Disk sizing:** Vercel Sandbox does not currently support Hermes' `container_disk` resource knob. Leave `container_disk` unset or at the shared default `51200`; non-default values fail diagnostics and backend creation instead of being silently ignored.

### Singularity/Apptainer Backend

Runs commands in a [Singularity/Apptainer](https://apptainer.org) container. Designed for HPC clusters and shared machines where Docker isn't available.

```yaml
terminal:
  backend: singularity
  singularity_image: "docker://nikolaik/python-nodejs:python3.11-nodejs20"
  container_cpu: 1 # CPU cores
  container_memory: 5120 # MB
  container_persistent: true # Writable overlay persists across sessions
```

**Requirements:** `apptainer` or `singularity` binary in `$PATH`.

**Image handling:** Docker URLs (`docker://...`) are automatically converted to SIF files and cached. Existing `.sif` files are used directly.

**Scratch directory:** Resolved in order: `TERMINAL_SCRATCH_DIR` → `TERMINAL_SANDBOX_DIR/singularity` → `/scratch/$USER/hermes-agent` (HPC convention) → `~/.hermes/sandboxes/singularity`.
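The scratch-directory fallback chain can be expressed as a short resolver. This is an illustrative sketch: `resolve_scratch_dir` is hypothetical, and the condition for choosing `/scratch/$USER/hermes-agent` (the directory exists and is writable) is an assumption, injected as `is_usable` so it can be tested.

```python
import os
from pathlib import Path
from typing import Callable, Mapping, Optional


def resolve_scratch_dir(
    env: Optional[Mapping[str, str]] = None,
    home: Optional[Path] = None,
    is_usable: Callable[[Path], bool] = lambda p: p.is_dir() and os.access(p, os.W_OK),
) -> Path:
    """Walk the documented fallback chain and return the first match."""
    env = os.environ if env is None else env
    home = Path.home() if home is None else home

    if env.get("TERMINAL_SCRATCH_DIR"):
        return Path(env["TERMINAL_SCRATCH_DIR"])
    if env.get("TERMINAL_SANDBOX_DIR"):
        return Path(env["TERMINAL_SANDBOX_DIR"]) / "singularity"
    # HPC convention; assumed to apply only when /scratch is usable.
    if is_usable(Path("/scratch")):
        return Path("/scratch") / env.get("USER", "unknown") / "hermes-agent"
    return home / ".hermes" / "sandboxes" / "singularity"
```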

**Isolation:** Uses `--containall --no-home` for full namespace isolation without mounting the host home directory.

### Common Terminal Backend Issues

If terminal commands fail immediately or the terminal tool is reported as disabled:

- **Local** — No special requirements. The safest default when getting started.

- **Docker** — Run `docker version` to verify Docker is working. If it fails, fix Docker or `hermes config set terminal.backend local`.

- **SSH** — Both `TERMINAL_SSH_HOST` and `TERMINAL_SSH_USER` must be set. Hermes logs a clear error if either is missing.

- **Modal** — Needs `MODAL_TOKEN_ID` and `MODAL_TOKEN_SECRET` env vars, or `~/.modal.toml`. Run `hermes doctor` to check.

- **Daytona** — Needs `DAYTONA_API_KEY`. The Daytona SDK handles server URL configuration.

- **Singularity** — Needs `apptainer` or `singularity` in `$PATH`. Common on HPC clusters.

When in doubt, set `terminal.backend` back to `local` and verify that commands run there first.

### Remote-to-Host File Sync on Teardown

For the **SSH**, **Modal**, and **Daytona** backends (anywhere the agent's working tree lives on a different machine than the host running Hermes), Hermes tracks files the agent touched inside the remote sandbox and, on session teardown / sandbox cleanup, **syncs the modified files back to the host** under `~/.hermes/cache/remote-syncs/<session-id>/`.

- Triggers on: session close, `/new`, `/reset`, gateway message timeout, `delegate_task` subagent completion when the child used a remote backend.

- Covers the whole tree the agent modified, not just files it explicitly opened. Additions, edits, and deletions are all captured.

- The remote sandbox may have been torn down by the time you go looking; the local `~/.hermes/cache/remote-syncs/…` copy is the authoritative record of what the agent changed.

- Large binary outputs (model checkpoints, raw datasets) are capped by size — the sync skips files over `file_sync_max_mb` (default `100`). Bump that if you expect bigger artifacts to come back.

```yaml
terminal:
  file_sync_max_mb: 100 # default: sync files up to 100 MB each
  file_sync_enabled: true # default: set false to skip the sync entirely
```

This is how you recover results from ephemeral cloud sandboxes that get destroyed after the session ends, without having to tell the agent to explicitly `scp` or `modal volume put` every artifact.

### Docker Volume Mounts

When using the Docker backend, `docker_volumes` lets you share host directories with the container. Each entry uses standard Docker `-v` syntax: `host_path:container_path[:options]`.

```yaml
terminal:
  backend: docker
  docker_volumes:
    - "/home/user/projects:/workspace/projects" # Read-write (default)
    - "/home/user/datasets:/data:ro" # Read-only
    - "/home/user/.hermes/cache/documents:/output" # Gateway-visible exports
```

This is useful for:

- **Providing files** to the agent (datasets, configs, reference code)

- **Receiving files** from the agent (generated code, reports, exports)

- **Shared workspaces** where both you and the agent access the same files

If you use a messaging gateway and want the agent to send generated files via `MEDIA:/...`, prefer a dedicated host-visible export mount such as `/home/user/.hermes/cache/documents:/output`.

- Write files inside Docker to `/output/...`

- Emit the **host path** in `MEDIA:`, for example: `MEDIA:/home/user/.hermes/cache/documents/report.txt`

- Do **not** emit `/workspace/...` or `/output/...` unless that exact path also exists for the gateway process on the host

:::warning

YAML duplicate keys silently override earlier ones. If you already have a `docker_volumes:` block, merge new mounts into the same list instead of adding another `docker_volumes:` key later in the file.

:::

Can also be set via environment variable: `TERMINAL_DOCKER_VOLUMES='["/host:/container"]'` (JSON array).
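Because each entry is a plain `host_path:container_path[:options]` string, you can sanity-check a `TERMINAL_DOCKER_VOLUMES` value before launching Hermes. The sketch below is hypothetical (`VolumeSpec`, `parse_volume`, and `volumes_from_env` are not Hermes APIs) and only handles POSIX paths; Windows drive letters such as `C:\` would confuse the colon split.

```python
import json
from typing import List, NamedTuple, Optional


class VolumeSpec(NamedTuple):
    host_path: str
    container_path: str
    options: Optional[str]  # e.g. "ro"; None means Docker's read-write default


def parse_volume(spec: str) -> VolumeSpec:
    """Split host_path:container_path[:options] (POSIX paths only)."""
    parts = spec.split(":")
    if len(parts) == 2:
        return VolumeSpec(parts[0], parts[1], None)
    if len(parts) == 3:
        return VolumeSpec(parts[0], parts[1], parts[2])
    raise ValueError(f"expected host_path:container_path[:options], got {spec!r}")


def volumes_from_env(value: str) -> List[VolumeSpec]:
    """Decode a TERMINAL_DOCKER_VOLUMES value: a JSON array of -v strings."""
    specs = json.loads(value)
    if not isinstance(specs, list):
        raise ValueError("TERMINAL_DOCKER_VOLUMES must be a JSON array")
    return [parse_volume(s) for s in specs]
```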

### Docker Credential Forwarding

By default, Docker terminal sessions do not inherit arbitrary host credentials. If you need a specific token inside the container, add it to `terminal.docker_forward_env`.

```yaml
terminal:
  backend: docker
  docker_forward_env:
    - "GITHUB_TOKEN"
    - "NPM_TOKEN"
```

Hermes resolves each listed variable from your current shell first, then falls back to `~/.hermes/.env` if it was saved with `hermes config set`.
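The lookup order (current shell first, then `~/.hermes/.env`) looks roughly like this. A minimal sketch under stated assumptions: the `.env` parser only understands simple `KEY=VALUE` lines, names found in neither source are skipped, and both helper names are hypothetical.

```python
import os
from pathlib import Path
from typing import Dict, Iterable, Mapping, Optional

DOTENV_PATH = Path.home() / ".hermes" / ".env"


def parse_dotenv_text(text: str) -> Dict[str, str]:
    """Parse simple KEY=VALUE lines; blank lines and # comments are skipped."""
    values: Dict[str, str] = {}
    for raw in text.splitlines():
        line = raw.strip()
        if not line or line.startswith("#") or "=" not in line:
            continue
        key, _, value = line.partition("=")
        values[key.strip()] = value.strip().strip("\"'")
    return values


def resolve_forwarded_env(
    names: Iterable[str],
    shell_env: Optional[Mapping[str, str]] = None,
    dotenv: Optional[Mapping[str, str]] = None,
) -> Dict[str, str]:
    """Shell environment first, then ~/.hermes/.env; names found in neither are skipped."""
    if shell_env is None:
        shell_env = os.environ
    if dotenv is None:
        dotenv = parse_dotenv_text(DOTENV_PATH.read_text()) if DOTENV_PATH.is_file() else {}
    resolved: Dict[str, str] = {}
    for name in names:
        if name in shell_env:
            resolved[name] = shell_env[name]
        elif name in dotenv:
            resolved[name] = dotenv[name]
    return resolved
```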

:::warning

Anything listed in `docker_forward_env` becomes visible to commands run inside the container. Only forward credentials you are comfortable exposing to the terminal session.

:::

### Running the Container as Your Host User

By default Docker containers run as `root` (UID 0). Files created inside `/workspace` or other bind-mounts end up owned by root on the host, so after a session you have to `sudo chown` them before you can edit them from your host editor. The `terminal.docker_run_as_host_user` flag fixes this:

```yaml
terminal:
  backend: docker
  docker_run_as_host_user: true # default: false
```

When enabled, Hermes appends `--user $(id -u):$(id -g)` to the `docker run` command so files written into bind-mounted directories (`/workspace`, `/root`, anything in `docker_volumes`) are owned by your host user, not root. The trade-off: the container can no longer `apt install` or write to root-owned paths like `/root/.npm` — use a base image whose `HOME` is owned by a non-root user (or add your required tooling at image build time) if you need both.

Leave this `false` (the default) for backwards-compatible behavior. Turn it on when your workflow is mostly "edit mounted host files" and you're tired of `sudo chown -R`.

### Optional: Mount the Launch Directory into `/workspace`

Docker sandboxes stay isolated by default. Hermes does **not** pass your current host working directory into the container unless you explicitly opt in.

Enable it in `config.yaml`:

```yaml
terminal:
  backend: docker
  docker_mount_cwd_to_workspace: true
```

When enabled:

- if you launch Hermes from `~/projects/my-app`, that host directory is bind-mounted to `/workspace`

- the Docker backend starts in `/workspace`

- file tools and terminal commands both see the same mounted project

When disabled, `/workspace` stays sandbox-owned unless you explicitly mount something via `docker_volumes`.

Security tradeoff:

- `false` preserves the sandbox boundary

- `true` gives the sandbox direct access to the directory you launched Hermes from

Use the opt-in only when you intentionally want the container to work on live host files.

### Persistent Shell

By default, each terminal command runs in its own subprocess — working directory, environment variables, and shell variables reset between commands. When **persistent shell** is enabled, a single long-lived bash process is kept alive across `execute()` calls so that state survives between commands.

This is most useful for the **SSH backend**, where it also eliminates per-command connection overhead. Persistent shell is **enabled by default for SSH** and disabled for the local backend.

```yaml
terminal:
  persistent_shell: true # default; enables persistent shell for SSH
```

To disable:

```bash

hermes config set terminal.persistent_shell false

```

**What persists across commands:**

- Working directory (`cd /tmp` sticks for the next command)

- Exported environment variables (`export FOO=bar`)

- Shell variables (`MY_VAR=hello`)

**Precedence:**

| Level | Variable | Default |
|-------|----------|---------|
| Config | `terminal.persistent_shell` | `true` |
| SSH override | `TERMINAL_SSH_PERSISTENT` | follows config |
| Local override | `TERMINAL_LOCAL_PERSISTENT` | `false` |

Per-backend environment variables take highest precedence. If you want persistent shell on the local backend too:

```bash

export TERMINAL_LOCAL_PERSISTENT=true

```

:::note

Commands that require `stdin_data` or sudo automatically fall back to one-shot mode, since the persistent shell's stdin is already occupied by the IPC protocol.

:::
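The precedence table can be read as the resolver below. It is a sketch, not Hermes' code: the accepted spellings of "true", the choice to ignore `terminal.persistent_shell` on the local backend (the table lists only `TERMINAL_LOCAL_PERSISTENT` there), and returning `False` for other backends are all assumptions.

```python
from typing import Mapping, Optional

TRUTHY = {"1", "true", "yes", "on"}  # assumed accepted spellings


def _env_flag(env: Mapping[str, str], name: str) -> Optional[bool]:
    """True/False when the variable is set, None when it is absent."""
    if name not in env:
        return None
    return env[name].strip().lower() in TRUTHY


def persistent_shell_enabled(backend: str, config: Mapping, env: Mapping[str, str]) -> bool:
    if backend == "ssh":
        override = _env_flag(env, "TERMINAL_SSH_PERSISTENT")
        if override is not None:
            return override  # per-backend env var wins
        return bool(config.get("terminal", {}).get("persistent_shell", True))
    if backend == "local":
        override = _env_flag(env, "TERMINAL_LOCAL_PERSISTENT")
        return override if override is not None else False
    return False  # assumption: backends outside this table are not covered
```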

See [Code Execution](features/code-execution.md) and the [Terminal section of the README](features/tools.md) for details on each backend.

## Skill Settings

Skills can declare their own configuration settings via their SKILL.md frontmatter. These are non-secret values (paths, preferences, domain settings) stored under the `skills.config` namespace in `config.yaml`.

```yaml
skills:
  config:
    myplugin:
      path: ~/myplugin-data # Example; each skill defines its own keys
```

**How skill settings work:**

- `hermes config migrate` scans all enabled skills, finds unconfigured settings, and offers to prompt you

- `hermes config show` displays all skill settings under "Skill Settings" with the skill they belong to

- When a skill loads, its resolved config values are injected into the skill context automatically

**Setting values manually:**

```bash

hermes config set skills.config.myplugin.path ~/myplugin-data

```

For details on declaring config settings in your own skills, see [Creating Skills — Config Settings](/docs/developer-guide/creating-skills#config-settings-configyaml).

### Guard on agent-created skill writes

When the agent uses `skill_manage` to create, edit, patch, or delete a skill, Hermes can optionally scan the new/updated content for dangerous keyword patterns (credential harvesting, obvious prompt injection, exfil instructions). The scanner is **off by default** — real agent workflows that legitimately touch `~/.ssh/` or mention `$OPENAI_API_KEY` were tripping the heuristic too often. Turn it back on if you want the scanner to prompt you before the agent's skill writes land:

```yaml
skills:
  guard_agent_created: true # default: false
```

When on, any flagged `skill_manage` write surfaces as an approval prompt with the scanner's rationale. Accepted writes land; denied writes return an explanatory error to the agent.

## Memory Configuration

```yaml
memory:
  memory_enabled: true
  user_profile_enabled: true
  memory_char_limit: 2200 # ~800 tokens
  user_char_limit: 1375 # ~500 tokens
```

## File Read Safety

Controls how much content a single `read_file` call can return. Reads that exceed the limit are rejected with an error telling the agent to use `offset` and `limit` for a smaller range. This prevents a single read of a minified JS bundle or large data file from flooding the context window.

```yaml

file_read_max_chars: 100000 # default — ~25-35K tokens

```

Raise it if you're on a model with a large context window and frequently read big files. Lower it for small-context models to keep reads efficient:

```yaml

# Large context model (200K+)

file_read_max_chars: 200000

# Small local model (16K context)

file_read_max_chars: 30000

```

The agent also deduplicates file reads automatically — if the same file region is read twice and the file hasn't changed, a lightweight stub is returned instead of re-sending the content. This resets on context compression so the agent can re-read files after their content is summarized away.
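To make the size limit and the dedup behavior concrete, here is a hypothetical `ReadGuard` class. It assumes dedup is keyed on the path plus the requested range and invalidated by a change in modification time, that `offset` counts lines from zero, and that the stub text is illustrative; Hermes' real stub and error messages may differ.

```python
import os
from typing import Dict, Tuple


class ReadGuard:
    """Hypothetical sketch of the read_file size limit and dedup cache."""

    def __init__(self, max_chars: int = 100_000):
        self.max_chars = max_chars
        # (path, offset, limit) -> file mtime when that range was last served
        self._seen: Dict[Tuple[str, int, int], float] = {}

    def read(self, path: str, offset: int = 0, limit: int = 2000) -> str:
        mtime = os.path.getmtime(path)
        key = (path, offset, limit)
        if self._seen.get(key) == mtime:
            return f"[unchanged since last read: {path}]"  # lightweight stub
        with open(path, encoding="utf-8", errors="replace") as fh:
            lines = fh.read().splitlines()[offset : offset + limit]
        content = "\n".join(lines)
        if len(content) > self.max_chars:
            raise ValueError(
                f"{len(content)} chars exceeds file_read_max_chars={self.max_chars}; "
                "use offset and limit to read a smaller range"
            )
        self._seen[key] = mtime
        return content

    def on_compression(self) -> None:
        """Forget dedup state so summarized-away files can be re-read in full."""
        self._seen.clear()
```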

## Tool Output Truncation Limits

Three related caps control how much raw output a tool can return before Hermes truncates it:

```yaml
tool_output:
  max_bytes: 50000 # terminal output cap (chars)
  max_lines: 2000 # read_file pagination cap
  max_line_length: 2000 # per-line cap in read_file's line-numbered view
```

- **`max_bytes`** — When a `terminal` command produces more than this many characters of combined stdout/stderr, Hermes keeps the first 40% and last 60% and inserts a `[OUTPUT TRUNCATED]` notice between them. Default `50000` (≈12-15K tokens across typical tokenisers).

- **`max_lines`** — Upper bound on the `limit` parameter of a single `read_file` call. Requests above this are clamped so a single read can't flood the context window. Default `2000`.

- **`max_line_length`** — Per-line cap applied when `read_file` emits the line-numbered view. Lines longer than this are truncated to this many chars followed by `... [truncated]`. Default `2000`.
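The three caps can be sketched as plain string helpers. Assumptions: the 40/60 split is taken of `max_bytes` itself, the notice is surrounded by blank lines, and lengths are counted in Python characters; the function names are hypothetical.

```python
TRUNCATION_NOTICE = "\n\n[OUTPUT TRUNCATED]\n\n"


def truncate_terminal_output(text: str, max_bytes: int = 50_000) -> str:
    """Keep the first 40% and last 60% of the budget, counted in characters."""
    if len(text) <= max_bytes:
        return text
    head = max_bytes * 2 // 5  # integer math avoids float rounding
    tail = max_bytes - head
    return text[:head] + TRUNCATION_NOTICE + text[len(text) - tail :]


def clamp_read_limit(limit: int, max_lines: int = 2000) -> int:
    """Requests above max_lines are clamped rather than rejected."""
    return min(limit, max_lines)


def truncate_line(line: str, max_line_length: int = 2000) -> str:
    if len(line) <= max_line_length:
        return line
    return line[:max_line_length] + "... [truncated]"
```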

Raise the limits on models with large context windows that can afford more raw output per call. Lower them for small-context models to keep tool results compact:

```yaml
# Large context model (200K+)
tool_output:
  max_bytes: 150000
  max_lines: 5000

# Small local model (16K context)
tool_output:
  max_bytes: 20000
  max_lines: 500
```

## Global Toolset Disable

To suppress specific toolsets across the CLI and every gateway platform in one place, list their names under `agent.disabled_toolsets`:

```yaml
agent:
  disabled_toolsets:
    - memory # hide memory tools + MEMORY_GUIDANCE injection
    - web # no web_search / web_extract anywhere
```

This applies **after** per-platform tool config (`platform_toolsets` written by `hermes tools`), so a toolset listed here is always removed, even if a platform's saved config still lists it. Use this when you want a single switch for "turn X off everywhere" rather than editing 15+ platform rows in the `hermes tools` UI.

Leaving the list empty, or omitting the key, is a no-op.
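Because the global list is applied after each platform's saved toolsets, the effective set is an order-preserving filter. A hypothetical sketch (`effective_toolsets` is not a Hermes API):

```python
from typing import Iterable, List, Optional


def effective_toolsets(
    platform_toolsets: Iterable[str],
    disabled_toolsets: Optional[Iterable[str]] = None,
) -> List[str]:
    """Apply agent.disabled_toolsets after the per-platform list; empty or missing is a no-op."""
    disabled = set(disabled_toolsets or ())
    return [name for name in platform_toolsets if name not in disabled]
```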

## Git Worktree Isolation

Enable isolated git worktrees for running multiple agents in parallel on the same repo:

```yaml

worktree: true # Always create a worktree (same as hermes -w)

# worktree: false # Default — only when -w flag is passed

```

When enabled, each CLI session creates a fresh worktree under `.worktrees/` with its own branch. Agents can edit files, commit, push, and create PRs without interfering with each other. Clean worktrees are removed on exit; dirty ones are kept for manual recovery.

You can also list gitignored files to copy into worktrees via `.worktreeinclude` in your repo root:

```

# .worktreeinclude

.env

.venv/

node_modules/

```

## Context Compression

Hermes automatically compresses long conversations to stay within your model's context window. The compression summarizer is a separate LLM call — you can point it at any provider or endpoint.

All compression settings live in `config.yaml` (no environment variables).

### Full reference

```yaml
compression:
  enabled: true # Toggle compression on/off
  threshold: 0.50 # Compress at this % of context limit
  target_ratio: 0.20 # Fraction of threshold to preserve as recent tail
  protect_last_n: 20 # Min recent messages to keep uncompressed
  hygiene_hard_message_limit: 400 # Gateway safety valve (see below)

# The summarization model/provider is configured under auxiliary:
auxiliary:
  compression:
    model: "google/gemini-3-flash-preview" # Model for summarization
    provider: "auto" # Provider: "auto", "openrouter", "nous", "codex", "main", etc.
    base_url: null # Custom OpenAI-compatible endpoint (overrides provider)
```

:::info Legacy config migration

Older configs with `compression.summary_model`, `compression.summary_provider`, and `compression.summary_base_url` are automatically migrated to `auxiliary.compression.*` on first load (config version 17). No manual action needed.

:::

`hygiene_hard_message_limit` is a gateway-only **pre-compression safety valve**. Runaway sessions with thousands of messages can hit model context limits before the normal percent-of-context threshold fires; when message count crosses this ceiling, Hermes forces compression regardless of token usage. Default `400` — raise it for platforms where very long sessions are normal, lower it to force more aggressive compression. Editing this value on a running gateway takes effect on the next message (see below).
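As a worked example with the defaults on a 200K-token window: compression fires at 0.50 × 200,000 = 100,000 tokens, and the recent tail kept uncompressed is about 0.20 × 100,000 = 20,000 tokens. The sketch below encodes that arithmetic plus the gateway message-count valve. The function names are hypothetical, `protect_last_n` is not modeled, and treating "crosses this ceiling" as strictly greater than the limit is an assumption.

```python
from dataclasses import dataclass


@dataclass
class CompressionConfig:
    threshold: float = 0.50
    target_ratio: float = 0.20
    hygiene_hard_message_limit: int = 400


def should_compress(tokens_used: int, message_count: int, context_limit: int,
                    cfg: CompressionConfig, is_gateway: bool = False) -> bool:
    if is_gateway and message_count > cfg.hygiene_hard_message_limit:
        return True  # safety valve: fires regardless of token usage
    return tokens_used >= context_limit * cfg.threshold


def tail_token_budget(context_limit: int, cfg: CompressionConfig) -> int:
    """Recent-tail size is target_ratio of the trigger point."""
    return round(context_limit * cfg.threshold * cfg.target_ratio)
```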

:::tip Gateway hot-reload of compression and context length

As of recent releases, editing `model.context_length` or any `compression.*` key in `config.yaml` on a running gateway takes effect on the next message — no gateway restart, no `/reset`, no session rotation required. The cached-agent signature includes these keys, so the gateway transparently rebuilds the agent when it sees a change. API keys and tool/skill config still require the usual reload paths.

:::

### Common setups

**Default (auto-detect) — no configuration needed:**

```yaml
compression:
  enabled: true
  threshold: 0.50
```

Uses your main provider and main model. Override per-task (e.g. `auxiliary.compression.provider: openrouter` + `model: google/gemini-2.5-flash`) if you want compression on a cheaper model than your main chat model.

**Force a specific provider** (OAuth or API-key based):

```yaml
auxiliary:
  compression:
    provider: nous
    model: gemini-3-flash
```

Works with any provider: `nous`, `openrouter`, `codex`, `anthropic`, `main`, etc.

**Custom endpoint** (self-hosted, Ollama, zai, DeepSeek, etc.):

```yaml
auxiliary:
  compression:
    model: glm-4.7
    base_url: https://api.z.ai/api/coding/paas/v4
```

Points at a custom OpenAI-compatible endpoint. Uses `OPENAI_API_KEY` for auth.

### How the three knobs interact

| `auxiliary.compression.provider` | `auxiliary.compression.base_url` | Result |
|---------------------|---------------------|--------|
| `auto` (default) | not set | Auto-detect best available provider |
| `nous` / `openrouter` / etc. | not set | Force that provider, use its auth |
| any | set | Use the custom endpoint directly (provider ignored) |

:::warning Summary model context length requirement

The summary model **must** have a context window at least as large as your main agent model's. The compressor sends the full middle section of the conversation to the summary model — if that model's context window is smaller than the main model's, the summarization call will fail with a context length error. When this happens, the middle turns are **dropped without a summary**, losing conversation context silently. If you override the model, verify its context length meets or exceeds your main model's.

:::
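The routing table and the context-length requirement can be checked with a few lines of Python. `route_summarizer` mirrors the provider/base_url table; `check_summary_context` is a hypothetical pre-flight check you could run yourself, not something Hermes is documented to do.

```python
from typing import NamedTuple, Optional


class SummarizerRoute(NamedTuple):
    kind: str  # "auto", "provider", or "custom_endpoint"
    provider: Optional[str]
    base_url: Optional[str]


def route_summarizer(provider: str = "auto", base_url: Optional[str] = None) -> SummarizerRoute:
    if base_url:  # a custom endpoint wins; provider is ignored (auth via OPENAI_API_KEY)
        return SummarizerRoute("custom_endpoint", None, base_url)
    if provider and provider != "auto":
        return SummarizerRoute("provider", provider, None)
    return SummarizerRoute("auto", None, None)


def check_summary_context(summary_ctx: int, main_ctx: int) -> None:
    """Fail early instead of letting middle turns be dropped without a summary."""
    if summary_ctx < main_ctx:
        raise ValueError(
            f"summary model context ({summary_ctx}) is smaller than the main model's ({main_ctx})"
        )
```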

## Context Engine

The context engine controls how conversations are managed when approaching the model's token limit. The built-in `compressor` engine uses lossy summarization (see [Context Compression](/docs/developer-guide/context-compression-and-caching)). Plugin engines can replace it with alternative strategies.

```yaml
context:
  engine: "compressor" # default: built-in lossy summarization
```

To use a plugin engine (e.g., LCM for lossless context management):

```yaml
context:
  engine: "lcm" # must match the plugin's name
```

Plugin engines are **never auto-activated** — you must explicitly set `context.engine` to the plugin name. Available engines can be browsed and selected via `hermes plugins` → Provider Plugins → Context Engine.

See [Memory Providers](/docs/user-guide/features/memory-providers) for the analogous single-select system for memory plugins.

## Iteration Budget Pressure

When the agent is working on a complex task with many tool calls, it can burn through its iteration budget (default: 90 turns) without realizing it's running low. Budget pressure automatically warns the model as it approaches the limit:

| Threshold | Level | What the model sees |
|-----------|-------|---------------------|
| **70%** | Caution | `[BUDGET: 63/90. 27 iterations left. Start consolidating.]` |
| **90%** | Warning | `[BUDGET WARNING: 81/90. Only 9 left. Respond NOW.]` |

Warnings are injected into the last tool result's JSON (as a `_budget_warning` field) rather than as separate messages — this preserves prompt caching and doesn't disrupt the conversation structure.
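A sketch of how the two warning levels and the `_budget_warning` field fit together, assuming the last tool result is a JSON object. The message strings come from the table above; the integer threshold checks and the helper names are illustrative.

```python
import json
from typing import Optional


def budget_warning(used: int, max_turns: int = 90) -> Optional[str]:
    left = max_turns - used
    if used * 10 >= max_turns * 9:  # >= 90%, integer math avoids float edge cases
        return f"[BUDGET WARNING: {used}/{max_turns}. Only {left} left. Respond NOW.]"
    if used * 10 >= max_turns * 7:  # >= 70%
        return f"[BUDGET: {used}/{max_turns}. {left} iterations left. Start consolidating.]"
    return None


def attach_budget_warning(tool_result_json: str, used: int, max_turns: int = 90) -> str:
    """Add the warning as a field of the tool result instead of a separate message."""
    warning = budget_warning(used, max_turns)
    if warning is None:
        return tool_result_json
    payload = json.loads(tool_result_json)  # assumed to be a JSON object
    payload["_budget_warning"] = warning
    return json.dumps(payload)
```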

```yaml
agent:
  max_turns: 90 # Max iterations per conversation turn (default: 90)
  api_max_retries: 2 # Retries per provider before fallback engages (default: 2)
```

Budget pressure is enabled by default. The agent sees warnings naturally as part of tool results, encouraging it to consolidate its work and deliver a response before running out of iterations.

When the iteration budget is fully exhausted, the CLI shows a notification to the user: `⚠ Iteration budget reached (90/90) — response may be incomplete`. If the budget runs out during active work, the agent generates a summary of what was accomplished before stopping.

`agent.api_max_retries` controls how many times Hermes retries a provider API call on transient errors (rate limits, connection drops, 5xx) **before** fallback-provider switching engages. The default is `2` — three attempts total, matching the OpenAI SDK default. If you have [fallback providers](/docs/user-guide/features/fallback-providers) configured and want to fail over faster, drop this to `0` so the first transient error on your primary immediately hands off to the fallback instead of churning retries against the flaky endpoint.
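The retry-then-fallback order can be sketched generically. `TransientError` stands in for whatever errors Hermes classifies as transient, backoff between attempts is omitted, and `call_with_fallback` is hypothetical.

```python
from typing import Callable, Sequence, TypeVar

T = TypeVar("T")


class TransientError(Exception):
    """Stand-in for rate limits, dropped connections, and 5xx responses."""


def call_with_fallback(providers: Sequence[Callable[[], T]], api_max_retries: int = 2) -> T:
    last_error: Exception = RuntimeError("no providers configured")
    for call in providers:  # primary first, then configured fallbacks
        for _ in range(api_max_retries + 1):  # 2 retries means 3 attempts
            try:
                return call()
            except TransientError as exc:
                last_error = exc  # real code would back off before retrying
    raise last_error
```

With `api_max_retries=0`, the first transient error on the primary moves straight to the next provider.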

### API Timeouts

Hermes has separate timeout layers for streaming, plus a stale detector for non-streaming calls. The stale detectors auto-adjust for local providers only when you leave them at their implicit defaults.

| Timeout | Default | Local providers | Config / env |
|---------|---------|----------------|--------------|
| Socket read timeout | 120s | Auto-raised to 1800s | `HERMES_STREAM_READ_TIMEOUT` |
| Stale stream detection | 180s | Auto-disabled | `HERMES_STREAM_STALE_TIMEOUT` |
| Stale non-stream detection | 300s | Auto-disabled when left implicit | `providers.<id>.stale_timeout_seconds` or `HERMES_API_CALL_STALE_TIMEOUT` |
| API call (non-streaming) | 1800s | Unchanged | `providers.<id>.request_timeout_seconds` / `timeout_seconds` or `HERMES_API_TIMEOUT` |

The **socket read timeout** controls how long httpx waits for the next chunk of data from the provider. Local LLMs can take minutes for prefill on large contexts before producing the first token, so Hermes raises this to 30 minutes when it detects a local endpoint. If you explicitly set `HERMES_STREAM_READ_TIMEOUT`, that value is always used regardless of endpoint detection.

The **stale stream detection** kills connections that receive SSE keep-alive pings but no actual content. This is disabled entirely for local providers since they don't send keep-alive pings during prefill.

The **stale non-stream detection** kills non-streaming calls that produce no response for too long. By default Hermes disables this on local endpoints to avoid false positives during long prefills. If you explicitly set `providers.<id>.stale_timeout_seconds`, `providers.<id>.models.<model>.stale_timeout_seconds`, or `HERMES_API_CALL_STALE_TIMEOUT`, that explicit value is honored even on local endpoints.
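The socket read timeout rule reduces to a three-way choice. This sketch covers only that timeout (how an endpoint is detected as local is not shown), and the helper name is hypothetical. Its result would typically become the `read` component of an httpx timeout.

```python
import os
from typing import Mapping, Optional

DEFAULT_READ_TIMEOUT = 120.0  # seconds
LOCAL_READ_TIMEOUT = 1800.0  # 30 minutes for slow local prefill


def stream_read_timeout(is_local: bool, env: Optional[Mapping[str, str]] = None) -> float:
    env = os.environ if env is None else env
    explicit = env.get("HERMES_STREAM_READ_TIMEOUT")
    if explicit is not None:
        return float(explicit)  # explicit value is always used, local or not
    return LOCAL_READ_TIMEOUT if is_local else DEFAULT_READ_TIMEOUT
```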

## Context Pressure Warnings

Separate from iteration budget pressure, context pressure tracks how close the conversation is to the **compaction threshold** — the point where context compression fires to summarize older messages. This helps both you and the agent understand when the conversation is getting long.

| Progress | Level | What happens |
|----------|-------|-------------|
| **≥ 60%** to threshold | Info | CLI shows a cyan progress bar; gateway sends an informational notice |
| **≥ 85%** to threshold | Warning | CLI shows a bold yellow bar; gateway warns compaction is imminent |

In the CLI, context pressure appears as a progress bar in the tool output feed:

```
◐ context ████████████░░░░░░░░ 62% to compaction 48k threshold (50%) · approaching compaction
```

On messaging platforms, a plain-text notification is sent:

```
◐ Context: ████████████░░░░░░░░ 62% to compaction (threshold: 50% of window).
```

If auto-compression is disabled, the warning tells you context may be truncated instead.

Context pressure is automatic — no configuration needed. It fires purely as a user-facing notification and does not modify the message stream or inject anything into the model's context.
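
The progress figure and levels can be reproduced with a few lines of arithmetic. This is a minimal sketch under stated assumptions: progress is taken as used tokens divided by the compaction threshold (window size times threshold fraction), the 60% and 85% cut-offs come from the table above, and the 20-cell bar matches the CLI example. The function names and the 96k window are hypothetical, chosen so a 50% threshold lands at 48k as in the example.

```python
from typing import Optional

BAR_WIDTH = 20  # matches the 20-cell bar in the CLI example


def pressure(used_tokens: int, window: int, threshold_frac: float) -> float:
    """Fraction of the way to the compaction threshold (1.0 = compaction fires)."""
    return used_tokens / (window * threshold_frac)


def pressure_level(progress: float) -> Optional[str]:
    """Map progress to the notification level from the table (None = no notice)."""
    if progress >= 0.85:
        return "warning"
    if progress >= 0.60:
        return "info"
    return None


def render_bar(progress: float) -> str:
    filled = min(BAR_WIDTH, int(progress * BAR_WIDTH))
    return "█" * filled + "░" * (BAR_WIDTH - filled)


# Hypothetical numbers: a 96k window with a 50% threshold puts compaction at 48k.
p = pressure(used_tokens=29_760, window=96_000, threshold_frac=0.5)
print(f"{p:.0%}", pressure_level(p), render_bar(p))  # 62% info ████████████░░░░░░░░
```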

## Credential Pool Strategies

When you have multiple API keys or OAuth tokens for the same provider, configure the rotation strategy:

```yaml
credential_pool_strategies:
  openrouter: round_robin   # cycle through keys evenly
  anthropic: least_used     # always pick the least-used key
```

Options: `fill_first` (default), `round_robin`, `least_used`, `random`. See [Credential Pools](/docs/user-guide/features/credential-pools) for full documentation.
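
As a rough mental model of the four strategy names, here is a hypothetical key picker. It assumes `fill_first` means "keep using the first usable key until it is marked exhausted, then move on"; the Credential Pools page is authoritative on the real semantics. `CredentialPool` and its `exhausted` set are illustrative names, not Hermes's API.

```python
import itertools
import random
from collections import Counter
from typing import List, Optional, Set

STRATEGIES = {"fill_first", "round_robin", "least_used", "random"}


class CredentialPool:
    """Hypothetical key picker illustrating the four strategy names."""

    def __init__(self, keys: List[str], strategy: str = "fill_first",
                 rng: Optional[random.Random] = None) -> None:
        if strategy not in STRATEGIES:
            raise ValueError(f"unknown strategy: {strategy}")
        self.keys = list(keys)
        self.strategy = strategy
        self.usage: Counter = Counter()      # number of picks per key
        self.exhausted: Set[str] = set()     # keys marked unusable (e.g. quota hit)
        self._cycle = itertools.cycle(self.keys)
        self._rng = rng or random.Random()

    def pick(self) -> str:
        live = [k for k in self.keys if k not in self.exhausted]
        if not live:
            raise RuntimeError("no usable credentials left")
        if self.strategy == "fill_first":
            key = live[0]                    # assumption: stay on the first usable key
        elif self.strategy == "round_robin":
            key = next(k for k in self._cycle if k not in self.exhausted)
        elif self.strategy == "least_used":
            key = min(live, key=lambda k: self.usage[k])  # ties go to list order
        else:
            key = self._rng.choice(live)
        self.usage[key] += 1
        return key


pool = CredentialPool(["key-1", "key-2", "key-3"], strategy="round_robin")
print([pool.pick() for _ in range(4)])  # ['key-1', 'key-2', 'key-3', 'key-1']
```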

## Auxiliary Models

Hermes uses "auxiliary" models for side tasks like image analysis, web page summarization, browser screenshot analysis, session-title generation, and context compression. By default (`auxiliary.*.provider: "auto"`), Hermes routes every auxiliary task to your **main chat model** — the same provider/model you picked in `hermes model`. You don't need to configure anything to get started, but be aware that on expensive reasoning models (Opus, MiniMax M2.7, etc.) auxiliary tasks add meaningful cost. If you want cheap-and-fast side tasks regardless of your main model, set `auxiliary.<task>.provider` and `auxiliary.<task>.model` explicitly (for example, Gemini Flash on OpenRouter for vision and web extraction).

:::note Why "auto" uses your main model

Earlier builds split aggregator users (OpenRouter, Nous Portal) onto a cheap provider-side default. That was surprising — users who paid for an aggregator subscription would see a different model handling their auxiliary traffic. `auto` now uses the main model for everyone, and per-task overrides in `config.yaml` still win (see [Full auxiliary config reference](#full-auxiliary-config-reference) below).

:::
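
The routing rule can be sketched as a small resolver: an explicit per-task provider wins, and `auto` (or `main`) falls back to the main chat model. This is a hypothetical illustration, not Hermes's code. One point is an explicit assumption: that a per-task `model` still applies when the provider is left at `auto`.

```python
from dataclasses import dataclass
from typing import Mapping


@dataclass(frozen=True)
class ModelChoice:
    provider: str
    model: str  # "" stands for "the provider's default model"


def resolve_aux_model(task: str, aux_config: Mapping[str, Mapping[str, str]],
                      main: ModelChoice) -> ModelChoice:
    """Choose the model for one auxiliary task (hypothetical sketch)."""
    cfg = aux_config.get(task, {})
    provider = cfg.get("provider") or "auto"
    model = cfg.get("model") or ""
    if provider in ("auto", "main"):
        # Route to the main chat provider; assumption: an explicit model still applies.
        return ModelChoice(main.provider, model or main.model)
    # An explicit per-task provider overrides the main model.
    return ModelChoice(provider, model)


main = ModelChoice("anthropic", "claude-opus-4")  # hypothetical main model
aux = {"vision": {"provider": "openrouter", "model": "google/gemini-2.5-flash"}}
print(resolve_aux_model("vision", aux, main))
print(resolve_aux_model("compression", aux, main))
```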

### Configuring auxiliary models interactively

Instead of hand-editing YAML, run `hermes model` and pick **"Configure auxiliary models"** from the menu. You'll get an interactive per-task picker:

```
$ hermes model
→ Configure auxiliary models
  [ ] vision            currently: auto / main model
  [ ] web_extract       currently: auto / main model
  [ ] session_search    currently: openrouter / google/gemini-2.5-flash
  [ ] title_generation  currently: openrouter / google/gemini-3-flash-preview
  [ ] compression       currently: auto / main model
  [ ] approval          currently: auto / main model
```

Select a task, pick a provider (OAuth flows open a browser; API-key providers prompt for a key), then pick a model. The change persists to `auxiliary.<task>.*` in `config.yaml`. This uses the same machinery as the main-model picker, so there is no extra syntax to learn.

### Video Tutorial

<div style={{position: 'relative', width: '100%', aspectRatio: '16 / 9', marginBottom: '1.5rem'}}>
<iframe
  src="https://www.youtube.com/embed/NoF-YajElIM"
  title="Hermes Agent — Auxiliary Models Tutorial"
  style={{position: 'absolute', top: 0, left: 0, width: '100%', height: '100%', border: 0}}
  allow="accelerometer; autoplay; clipboard-write; encrypted-media; gyroscope; picture-in-picture; web-share"
  allowFullScreen
/>
</div>

### The universal config pattern

Every model slot in Hermes — auxiliary tasks, compression, fallback — uses the same three knobs:

| Key | What it does | Default |
|-----|-------------|---------|
| `provider` | Which provider to use for auth and routing | `"auto"` |
| `model` | Which model to request | provider's default |
| `base_url` | Custom OpenAI-compatible endpoint (overrides provider) | not set |

When `base_url` is set, Hermes ignores the provider and calls that endpoint directly (using `api_key` or `OPENAI_API_KEY` for auth). When only `provider` is set, Hermes uses that provider's built-in auth and base URL.
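
The endpoint and auth rule above can be sketched as follows. The one-entry registry and the `OPENROUTER_API_KEY` mapping are hypothetical stand-ins for Hermes's real provider registry, and `resolve_endpoint` is an illustrative name. The sketch deliberately does not handle `auto` or `main`.

```python
import os
from typing import Mapping, Optional, Tuple

# Hypothetical one-entry registry for illustration only.
PROVIDER_BASE_URLS = {"openrouter": "https://openrouter.ai/api/v1"}
PROVIDER_KEY_ENV = {"openrouter": "OPENROUTER_API_KEY"}


def resolve_endpoint(slot: Mapping[str, str],
                     env: Optional[Mapping[str, str]] = None) -> Tuple[str, Optional[str]]:
    """Return (base_url, api_key) for one model slot."""
    env = os.environ if env is None else env
    base_url = slot.get("base_url") or ""
    if base_url:
        # base_url is set: ignore provider; auth with api_key, else OPENAI_API_KEY.
        return base_url, slot.get("api_key") or env.get("OPENAI_API_KEY")
    provider = slot.get("provider") or "auto"
    if provider not in PROVIDER_BASE_URLS:
        # "auto", "main", and other providers are out of scope for this sketch.
        raise KeyError(f"provider not in this sketch's registry: {provider}")
    return PROVIDER_BASE_URLS[provider], env.get(PROVIDER_KEY_ENV[provider])


env = {"OPENAI_API_KEY": "sk-local", "OPENROUTER_API_KEY": "sk-or"}
print(resolve_endpoint({"provider": "openrouter", "base_url": "http://localhost:8000/v1"}, env))
# ('http://localhost:8000/v1', 'sk-local')
```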

Available providers for auxiliary tasks: `auto`, `main`, plus any provider in the [provider registry](/docs/reference/environment-variables) — `openrouter`, `nous`, `openai-codex`, `copilot`, `copilot-acp`, `anthropic`, `gemini`, `google-gemini-cli`, `qwen-oauth`, `zai`, `kimi-coding`, `kimi-coding-cn`, `minimax`, `minimax-cn`, `minimax-oauth`, `deepseek`, `nvidia`, `xai`, `ollama-cloud`, `alibaba`, `bedrock`, `huggingface`, `arcee`, `xiaomi`, `kilocode`, `opencode-zen`, `opencode-go`, `ai-gateway`, `azure-foundry` — or any named custom provider from your `custom_providers` list (e.g. `provider: "beans"`).

:::tip MiniMax OAuth

`minimax-oauth` logs in via browser OAuth (no API key needed). Run `hermes model` and select **MiniMax (OAuth)** to authenticate. Auxiliary tasks use `MiniMax-M2.7-highspeed` automatically. See the [MiniMax OAuth guide](../guides/minimax-oauth.md).

:::

:::warning `"main"` is for auxiliary tasks only

The `"main"` provider option means "use whatever provider my main agent uses" — it's only valid inside `auxiliary:`, `compression:`, and `fallback_model:` configs. It is **not** a valid value for your top-level `model.provider` setting. If you use a custom OpenAI-compatible endpoint, set `provider: custom` in your `model:` section. See [AI Providers](/docs/integrations/providers) for all main model provider options.

:::

### Full auxiliary config reference

```yaml
auxiliary:
  # Image analysis (vision_analyze tool + browser screenshots)
  vision:
    provider: "auto"        # "auto", "openrouter", "nous", "codex", "main", etc.
    model: ""               # e.g. "openai/gpt-4o", "google/gemini-2.5-flash"
    base_url: ""            # Custom OpenAI-compatible endpoint (overrides provider)
    api_key: ""             # API key for base_url (falls back to OPENAI_API_KEY)
    timeout: 120            # seconds; LLM API call timeout (vision payloads need a generous value)
    download_timeout: 30    # seconds; image HTTP download (increase for slow connections)

  # Web page summarization + browser page text extraction
  web_extract:
    provider: "auto"
    model: ""               # e.g. "google/gemini-2.5-flash"
    base_url: ""
    api_key: ""
    timeout: 360            # seconds (6 min); per-attempt LLM summarization

  # Dangerous command approval classifier
  approval:
    provider: "auto"
    model: ""
    base_url: ""
    api_key: ""
    timeout: 30             # seconds

  # Context compression timeout (separate from compression.* config)
  compression:
    timeout: 120            # seconds; compression summarizes long conversations, so it needs more time

  # Session search: summarizes past session matches
  session_search:
    provider: "auto"
    model: ""
    base_url: ""
    api_key: ""
    timeout: 30
    max_concurrency: 3      # Limit parallel summaries to reduce request-burst 429s
    extra_body: {}          # Provider-specific OpenAI-compatible request fields

  # Skills hub: skill matching and search
  skills_hub:
    provider: "auto"
    model: ""
    base_url: ""
    api_key: ""
    timeout: 30

  # MCP tool dispatch
  mcp:
    provider: "auto"
    model: ""
    base_url: ""
    api_key: ""
    timeout: 30
```

:::tip

Each auxiliary task has a configurable `timeout` (in seconds). Defaults: vision 120s, web_extract 360s, compression 120s, and 30s each for approval, session_search, skills_hub, and mcp. Increase these if you use slow local models for auxiliary tasks. Vision also has a separate `download_timeout` (default 30s) for the HTTP image download; increase it for slow connections or self-hosted image servers.

:::
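
A per-task `timeout` acts as a deadline on each auxiliary call. Below is a minimal sketch using `asyncio.wait_for`, assuming the call is an awaitable. `run_aux_task` and the shape of `aux_config` are hypothetical, and the defaults are copied from the config reference above.

```python
import asyncio
from typing import Awaitable, Mapping

# Per-task defaults in seconds, copied from the config reference above.
DEFAULT_AUX_TIMEOUTS = {
    "vision": 120, "web_extract": 360, "approval": 30, "compression": 120,
    "session_search": 30, "skills_hub": 30, "mcp": 30,
}


async def run_aux_task(task: str, call: Awaitable[str],
                       aux_config: Mapping[str, Mapping[str, float]]) -> str:
    """Await an auxiliary call; raise asyncio.TimeoutError once its timeout elapses."""
    timeout = aux_config.get(task, {}).get("timeout", DEFAULT_AUX_TIMEOUTS[task])
    return await asyncio.wait_for(call, timeout=timeout)
```

With a slow local model for approvals, you would raise the per-task value (for example `{"approval": {"timeout": 90}}` in this sketch's shape) rather than the global defaults.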

:::info

Context compression has its own `compression:` block for thresholds and an `auxiliary.compression:` block for model/provider settings — see [Context Compression](#context-compression) above. The fallback model uses a `fallback_model:` block — see [Fallback Model](/docs/integrations/providers#fallback-model). All three follow the same provider/model/base_url pattern.

:::

### Session Search Tuning

If you use a reasoning-heavy model for `auxiliary.session_search`, Hermes gives you two built-in controls:

- `auxiliary.session_search.max_concurrency`: limits how many matched sessions Hermes summarizes at once

- `auxiliary.session_search.extra_body`: forwards provider-specific OpenAI-compatible request fields on the summarization calls

Example:

```yaml
auxiliary:
  session_search:
    provider: "main"
    model: "glm-4.5-air"
    timeout: 60
    max_concurrency: 2
    extra_body:
      enable_thinking: false
```

Use `max_concurrency` when your provider rate-limits request bursts and you want `session_search` to trade some parallelism for stability.

Use `extra_body` only when your provider documents OpenAI-compatible request-body fields you want Hermes to pass through for that task. Hermes forwards the object as-is.
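
"Forwards the object as-is" can be pictured as a plain merge into the request body. This minimal sketch assumes the fields land at the top level of the OpenAI-compatible JSON body, which is how the OpenAI Python SDK's `extra_body` argument behaves. `build_chat_body` is a hypothetical helper. Note that a top-level merge also lets `extra_body` overwrite standard fields, so only send keys your provider documents.

```python
import json
from typing import Any, Dict, List, Mapping, Optional


def build_chat_body(model: str, messages: List[Dict[str, str]],
                    extra_body: Optional[Mapping[str, Any]] = None) -> Dict[str, Any]:
    """Build an OpenAI-compatible /chat/completions JSON body (hypothetical helper)."""
    body: Dict[str, Any] = {"model": model, "messages": messages}
    # Pass-through: provider-specific fields go in unmodified at the top level.
    body.update(extra_body or {})
    return body


body = build_chat_body("glm-4.5-air",
                       [{"role": "user", "content": "Summarize this session"}],
                       extra_body={"enable_thinking": False})
print(json.dumps(body))
```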

:::warning

`extra_body` is only effective when your provider actually supports the field you send. If the provider does not expose a native OpenAI-compatible reasoning-off flag, Hermes cannot synthesize one on its behalf.

:::

### Changing the Vision Model

To pin image analysis to GPT-4o:

```yaml
auxiliary:
  vision:
    model: "openai/gpt-4o"
```

Or via environment variable (in `~/.hermes/.env`):

```bash
AUXILIARY_VISION_MODEL=openai/gpt-4o
```

### Provider Options

These options apply to **auxiliary task configs** (`auxiliary:`, `compression:`, `fallback_model:`), not to your main `model.provider` setting.

| Provider | Description | Requirements |
|----------|-------------|-------------|
| `"auto"` | Best available (default). Vision tries OpenRouter → Nous → Codex. | — |